The Digital Divide 2.0: Inequality in the Age of AI

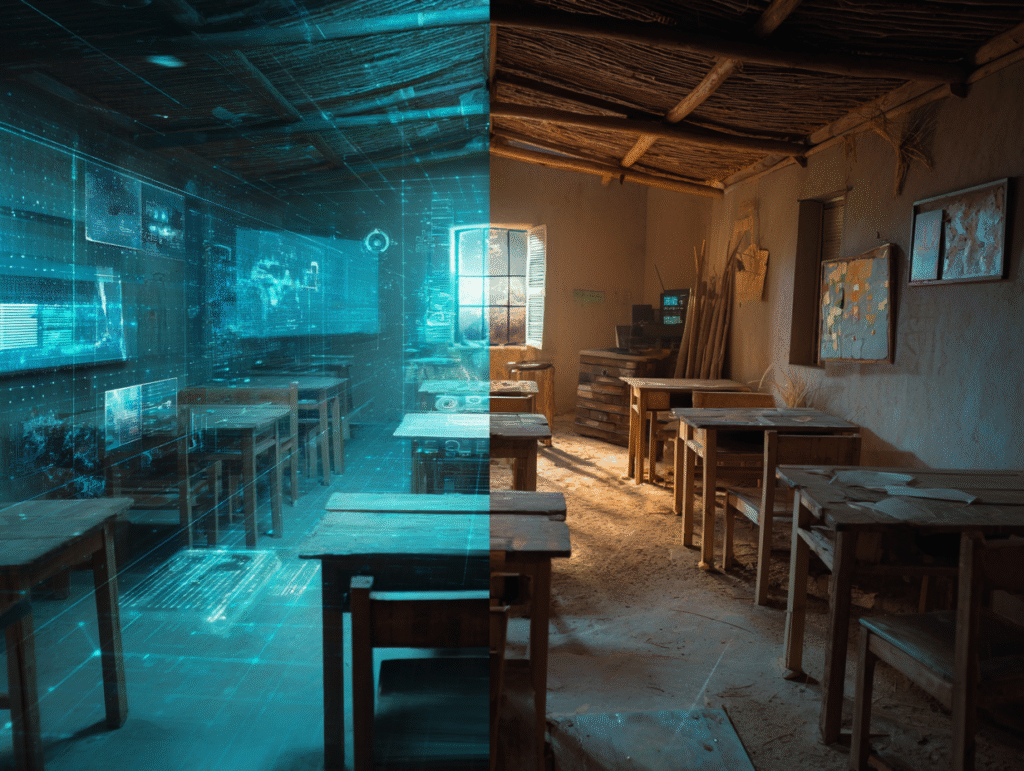

Morning. In one room, a girl taps her tablet and an AI tutor greets her by name. It remembers yesterday’s algebra mistake, adjusts the next problem, whispers a hint rather than the answer, and celebrates her small wins with data-calibrated warmth. In another room—half a world away—a boy shares a scuffed phone with his cousin. The signal flickers. An SMS quiz trickles in, two bars of service at a time. There’s no video, no voice, no adaptive path—just grit, patience, and a hope that the next message won’t fail to send.

At first glance, it seems like a matter of bandwidth and hardware. But the truth runs deeper: this isn’t only about who can connect to technology, but who can truly connect with it.

Some children are learning to use AI as a collaborator in their thinking; others are learning from systems that barely understand them. The divide is no longer just digital—it’s cognitive, cultural, and ethical.

We like to tell ourselves that technology is neutral, that it arrives like rain—falling on everyone equally. But AI doesn’t fall; it is placed: into languages that are well-represented, onto networks that are funded, inside classrooms that have trained teachers and time to integrate it. The new divide isn’t only who has devices or broadband. It’s who learns to think with AI—to question it, steer it, and turn it into a partner—versus who is quietly shaped by it, consuming whatever the algorithm serves.

This matters because childhood is architecture. The tools we give kids don’t just solve today’s homework; they scaffold tomorrow’s minds. When an AI tutor personalizes practice, a child experiences agency. When a recommendation engine auto-plays answers, a child experiences automation. One path builds curiosity and judgment; the other risks convenience without comprehension.

This is not a rich-versus-poor fable alone. Inequality runs through language (which dialects AI understands), culture (whose stories it reproduces), geography (which schools can deploy it well), gender (who is invited to build it), and ability (who gets adaptive interfaces that actually include them). It is also internal: a cognitive divide between children trained to collaborate with AI and children trained to accept it.

So the question for this generation isn’t whether AI will enter childhood. It already has. The question is how, and for whom. Will AI become an escalator for the already-advantaged and a treadmill for everyone else? Or can we design, teach, and govern it so that a child with only a basic phone in a remote village gains not just content, but the power to reason, to create, to push back?

In Alice in AI Land, we follow curiosity down its tunnels, but we keep our hand on the thread of ethics. This series widens our lens from the child to the world around the child: infrastructure, bias, policy, and the everyday choices adults make on their behalf. Ahead, we’ll map the real AI divide (it’s deeper than access), surface the ways inequality can be coded into “smart” systems, and then climb toward the hopeful edge, where AI truly levels the playing field.

Two classrooms, two signals, two futures. The mirror is here. What it reflects back will depend on us.

From Access to Understanding — The Real AI Divide

For years, the “digital divide” was measured in cables and screens — who had internet, who had devices, who could log on. But in the age of artificial intelligence, access itself no longer tells the full story. A child may hold a smartphone, even chat with a virtual assistant, and still remain on the wrong side of the new divide — the one between using technology and understanding it.

Nearly 2.9 billion people remain offline, most of them in developing regions. In some countries, fewer than one in five households can connect consistently. But even when the connection reaches a village, the knowledge gap often stays. UNICEF’s global survey on children and AI found sharp differences not only in access but also in trust, literacy, and purpose of use. In Argentina, more than a third of 9–11-year-olds already turn to ChatGPT for schoolwork, while millions of children elsewhere have never heard of such tools. One group learns to ask AI questions; the other waits for the answers that never arrive.

Yet the greater inequality begins after the connection. Some classrooms are teaching children to co-think with AI — to test its claims, explore its limits, and use it as a partner in problem-solving. Others, often overwhelmed by large classes or under-trained teachers, receive pre-packaged software that dictates what should be learned and when. The result is a silent stratification: one child learns how algorithms work; another learns from algorithms without realizing it.

This is the real AI divide — not a gap in electricity or hardware, but in epistemology. It’s the difference between children who are taught to see through the algorithm and those who grow up inside it. It determines whose minds are expanded by AI, and whose are quietly confined by it.

The divide is also gendered and linguistic. For every 100 boys worldwide with digital skills, only 65 girls can claim the same. And while English dominates the training data of large AI models, more than 2,000 languages across Africa remain digitally invisible. When a girl in Nairobi or Kolkata speaks to an AI that misunderstands her accent or syntax, she isn’t just facing a translation error — she’s facing a form of cultural erasure. The world’s smartest systems still struggle to hear much of humanity.

AI was once hailed as a universal amplifier of intelligence. But like any amplifier, it only magnifies what is already there. When it enters a well-funded school, it personalizes. When it enters an under-resourced one, it standardizes. The technology reflects the environment that surrounds it.

To bridge this divide, we need to redefine literacy itself. Reading, writing, and arithmetic are no longer enough. The new literacy is knowing how machines learn, and what they don’t know. Children must be taught not only to use AI but to question it — to ask why it recommends, who it represents, and whose voices it leaves out.

Because the real inequality of the AI age will not be measured in megabytes or devices, but in depth of understanding — the difference between those who program the narrative, and those who are programmed by it.

Algorithmic Bias — When Inequality Is Built Into the Code

If the last divide is about who gets to understand AI, this one is about what AI has learned about us.

And the answer, too often, is: not enough — or worse, the wrong things.

Artificial intelligence doesn’t invent its worldview from scratch; it absorbs it. Every dataset, every scraped photo, every sentence fed into a model becomes a mirror of the culture that made it. Which means when society is unequal, algorithms quietly learn those inequalities as truth.

In 2024, UNESCO tested several leading AI systems and found a pattern that was both familiar and chilling. Ask a model to generate a story about a woman, and it was four times more likely to place her in domestic scenes than a man. Ask for a “British man,” and he might emerge as a doctor, a banker, or a professor. Ask for a “Zulu man,” and he was more likely to appear as a gardener or security guard. Twenty percent of the stories about Zulu women cast them as domestic workers.

No one programmed that prejudice. It leaked in from the data — from a world still tilted in its portrayals, its languages, and its access to representation.

These distortions may seem abstract, but they echo through childhood. Millions of children now use AI-generated stories, chatbots, and image tools for homework and entertainment. Each biased output becomes a quiet tutor of perception. The child who sees AI repeatedly assign women to kitchens or Africans to menial labor learns a hidden curriculum: that intelligence, success, and creativity belong elsewhere. As UNESCO warned, even small biases in generative AI “can significantly amplify inequalities in the real world.”

Bias also hides in the mundane: in translation apps that erase nuance, in voice assistants that fail to recognize local accents, in image models that default to light skin when asked for a “professional portrait.” When AI doesn’t understand you, it subtly tells you that you are the one outside its world. For a child, that exclusion can cut deep — a reminder that the digital universe, too, has borders.

And sometimes, bias doesn’t just whisper; it rules.

In 2020, when the United Kingdom canceled national exams due to COVID-19, an algorithm was tasked with predicting students’ grades. It used past school data to infer performance, assuming that history was a fair judge. It wasn’t. The model downgraded nearly 40% of teacher-predicted A-level grades in England, disproportionately hurting students from poorer schools, while boosting the results of elite private institutions. The outcome was so unjust that students flooded the streets chanting, “F*** the algorithm!” until the government reversed the decision.

The lesson was clear: when algorithms inherit inequality, they automate it.
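
How a grading model can inherit a school’s history is easier to see in a toy example. The sketch below is not the method used in 2020; it is a deliberately simplified illustration that assumes a model hands this year’s students the grades their school earned in the past, in the order the teacher ranked them. The function name, student names, and grade histories are all hypothetical.

```python
def standardize(teacher_ranked: list[str], past_grades: list[str]) -> dict[str, str]:
    """Give this year's students the school's past grades, in rank order.

    teacher_ranked: student names, strongest first (hypothetical input).
    past_grades: grades the school earned before, best first, same length.
    """
    return dict(zip(teacher_ranked, past_grades))


# The same three students, ranked the same way by their teacher.
students = ["Amara", "Ben", "Chloe"]

# Two hypothetical schools with different grade histories.
well_resourced_history = ["A", "A", "B"]
under_resourced_history = ["B", "C", "D"]

print(standardize(students, well_resourced_history))
print(standardize(students, under_resourced_history))
```

In this sketch the students’ own work never enters the calculation. Identical pupils receive different grades purely because of where they study, which is the mechanism the paragraph above describes.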

This is the darker side of AI’s precision — it can replicate bias with mathematical confidence. A single flawed formula can transform social hierarchies into “objective” predictions, entrenching privilege behind a veneer of neutrality.

Philosophers now call this digital colonialism: the quiet export of one culture’s data, values, and language dominance into the global technological bloodstream. English-trained AIs “understand” more about London than Lagos, more about Paris than Phnom Penh. Entire ways of thinking (oral traditions, indigenous metaphors, non-Western reasoning styles) vanish in translation. When children raised in those traditions grow up consulting AI tutors that don’t reflect their worlds, they learn to adapt to the machine, not the other way around.

So, inequality in the AI age is not only who owns the technology — it’s whose worldview the technology owns.

If we want children to see themselves clearly in this digital mirror, we must first teach the mirror to see them.

Hope in the Cloud — AI as an Equalizer (If We Let It)

For every cautionary tale about bias, there is a story of transformation — of technology meeting need, not privilege.

Because the same algorithms that can replicate inequality can also, if guided well, repair it.

In Kenya, a student named Aisha doesn’t have broadband or a laptop. What she has is a small Nokia phone and Eneza Education, a platform that delivers AI-powered tutoring through basic SMS. The system adapts to her answers — easier when she struggles, harder when she improves — and it speaks her language. In a classroom of seventy, that kind of personalization was once unthinkable. Now it’s as close as a text.
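
The adaptive idea, easier when she struggles and harder when she improves, can be sketched in a few lines. This is a minimal illustration, not a description of how Eneza Education actually works: it assumes a simple “staircase” rule with five difficulty levels, and every name in it (`next_level`, `run_session`, the level range) is hypothetical.

```python
def next_level(level: int, was_correct: bool, lowest: int = 1, highest: int = 5) -> int:
    """Step up after a correct answer, down after a wrong one, within bounds."""
    step = 1 if was_correct else -1
    return max(lowest, min(highest, level + step))


def run_session(answers: list[bool], start: int = 3) -> list[int]:
    """Return the difficulty level served for each question in a session."""
    levels = []
    level = start
    for was_correct in answers:
        levels.append(level)  # level of the question being asked now
        level = next_level(level, was_correct)
    return levels


# A learner who misses twice, then answers three in a row correctly.
print(run_session([False, False, True, True, True]))  # prints [3, 2, 1, 2, 3]
```

A rule this small needs no video, voice, or broadband, only a record of the last answer, which is why it can fit inside a text message exchange.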

Across the continent, uLesson in Nigeria offers pre-downloaded AI learning videos that function offline, adjusting to each child’s learning speed. Kolibri, developed by Learning Equality, goes further — translating educational materials into local languages and using machine learning to recommend lessons based on what a community’s students need most. The software doesn’t assume English fluency or Western curriculum standards. It learns the rhythm of the village.

And for children with disabilities, AI has opened doors that once seemed sealed shut. Speech-to-text programs now help deaf students participate in class discussions in real time. Image-recognition apps describe visual diagrams for blind students, and new text-to-sign-language AIs are translating lessons into gestures for the first time. Out of the world’s 240 million children with disabilities, most live without access to adaptive materials — and yet AI, when trained inclusively, can give them a voice, a presence, and an equal chance to learn.

Even in the global infrastructure battle, AI is turning data into action. Project Giga, led by UNICEF and the International Telecommunication Union, uses machine learning and satellite imagery to map every school on Earth, revealing which ones remain offline. Over a million schools have been identified so far, and governments are using these maps to prioritize broadband expansion. For the first time, we’re not guessing where the digital divide lies — we can see it.

These stories carry a common theme: AI becomes empowering only when designed for the margins first. When innovation starts with the child who has the least, it tends to lift everyone else too.

Some thinkers now ask whether AI access itself should be considered a human right — a necessary extension of the right to education. Because if knowledge is increasingly mediated by intelligent systems, then denying a child the ability to use them safely and effectively is to deny them participation in the modern world. The argument isn’t about gadgets or apps; it’s about agency.

The promise of AI as an equalizer rests on three principles:

- Local adaptation — technology that speaks the user’s language and reflects their culture.

- Accessibility by design — systems that include children with disabilities from the start, not as an afterthought.

- Ethical guidance — teaching AI empathy, fairness, and awareness of difference.

If those principles hold, the same code that once divided could become the bridge.

A child like Aisha may never meet the engineers who built her tutor, but through their choices to design ethically and inclusively, she’s been handed something priceless: a fair chance to learn.

The Global Classroom — Policy, Education, and the Race to Catch Up

AI is spreading faster than any educational reform in history — but education itself is struggling to keep pace.

The tools are already in children’s hands; what’s missing are the policies, ethics, and trained adults to guide their use.

Around the world, governments and organizations are scrambling to turn principle into practice. In 2019, UNESCO gathered education ministers and technologists to draft what became the Beijing Consensus on AI in Education. Its message was clear: AI must enhance, not replace, human intelligence. It must reduce inequality, not reproduce it. Two years later, in 2021, UNESCO’s member states adopted the Recommendation on the Ethics of Artificial Intelligence — the first global framework to demand transparency, bias monitoring, and respect for cultural diversity in AI design. It is a quiet revolution in policy: a reminder that even in code, ethics must precede efficiency.

UNICEF has gone one step closer to the classroom. Its Policy Guidance on AI for Children outlines how schools, companies, and governments can uphold children’s rights in an automated world. In pilot programs across multiple countries, UNICEF works with educators to test these principles in practice — ensuring AI used in schools respects privacy, fairness, and inclusivity. Together with the International Telecommunication Union, UNICEF also leads Project Giga, using AI mapping to connect the world’s unconnected schools. Its aim: to ensure that no classroom, however remote, remains offline.

Some nations have started integrating these ideas into their own curricula. Rwanda’s Digital Skills Program trains youth to build local AI solutions — an African model of “AI from the ground up.” In Europe, countries like Finland and Estonia have introduced national AI-literacy modules in high school, teaching not just how to use AI, but how to question it. In the United States, school districts are beginning to weave AI awareness into digital citizenship courses, though the implementation is uneven. A 2024 study found that affluent suburban schools were significantly ahead of rural and high-poverty districts in AI adoption. The gap is widening at the policy level too: those with the most resources are defining what “AI in education” means for everyone else.

Teachers, caught in the middle, often feel the pressure most. Many see AI’s potential to reduce administrative burden or personalize learning — but few have received any formal training. Surveys show that fewer than one in five K–12 teachers actively use AI in class, while over a third of school districts plan to introduce workshops soon. The coming years will decide whether teachers become AI’s ethical gatekeepers or its bystanders.

Beyond national borders, a quieter collaboration is unfolding. Initiatives like AI4D (AI for Development) Africa and the EDISON Alliance (led by the World Economic Forum) unite governments, researchers, and NGOs around a shared mission: closing the AI literacy and infrastructure gap. These partnerships matter because AI inequality cannot be solved within one country’s borders; it is a networked problem. A child in rural Kenya or inland Turkey may rely on the same model trained in Silicon Valley — and if that model doesn’t understand their world, the inequality follows the code.

The challenge is not only logistical but philosophical.

Education systems were built for certainty — syllabi, tests, predictable outcomes. AI, by nature, thrives in ambiguity. It shifts faster than curricula can adapt. This mismatch leaves policymakers chasing a moving target: how to prepare children for a future defined by technologies that evolve while they sleep.

And so the global classroom stands at a crossroads. One path continues the old pattern — fragmented reforms, untrained teachers, and algorithms imported without context. The other demands a radical rethinking of what it means to educate in an intelligent age: teaching curiosity, ethics, and adaptability as core skills, not electives.

If education systems rise to this challenge, AI could become not just another subject but a lens through which all subjects are reimagined.

If they fail, AI will teach — but not necessarily what we want children to learn.

Co-Thinking vs. Being Shaped — The New Cognitive Divide

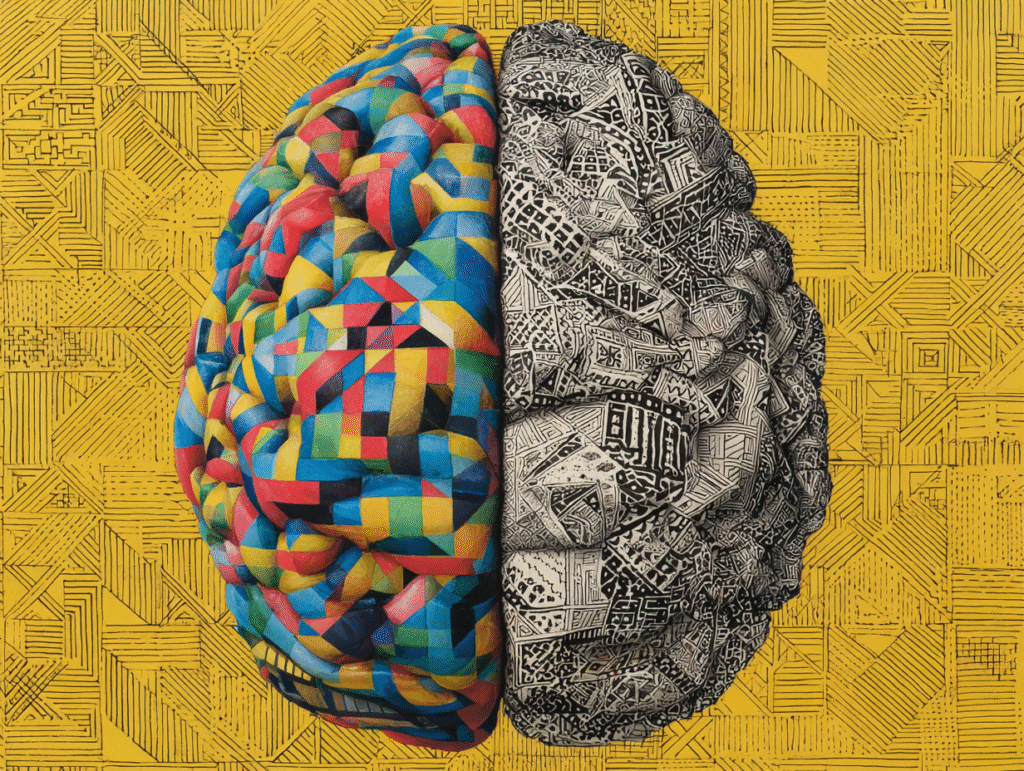

Every technological leap in history has redrawn the map of human ability — fire gave warmth and weaponry, printing gave memory external form, the internet gave speed to knowledge. But AI gives thought itself a mirror, and not every child learns how to look back into it.

We are witnessing the birth of a cognitive divide: a split not in hardware, but in how minds grow around technology. On one side are children who learn to co-think with AI, using it to extend curiosity, challenge assumptions, and build ideas larger than their own. On the other side are those who grow up shaped by AI, passive recipients of auto-generated answers and algorithmic routines. Both groups are “digital natives,” but only one is truly at home in the world of intelligent machines.

Picture two teenagers. One uses an AI assistant like a sparring partner: debating ethics, testing hypotheses, designing music or poetry in conversation with code. She has learned to distrust easy answers — to treat the AI as an instrument, not an oracle. The other scrolls endlessly through personalized feeds, her attention sculpted by invisible recommendation engines. Her choices feel spontaneous but are gently pre-decided. Her creativity loops inside an algorithmic echo chamber. The difference isn’t intellect — it’s agency.

This divide grows from the earlier ones we traced: access, literacy, and bias. A wealthy school may teach students how AI works (datasets, logic, bias detection), cultivating an instinct to question. Meanwhile, in underfunded or overwhelmed systems, AI may arrive as a black box: efficient, unquestioned, and absolute. There, students learn dependence, not discernment. The gap widens not only in knowledge, but in epistemology, in how children come to know what they know.

AI ethicists describe this as a “split in cognitive sovereignty.” Those who shape algorithms retain authorship over their worldview; those who merely consume their outputs risk mental homogenization. In practice, this means the next generation’s thinkers may emerge from a smaller and smaller fraction of the population — those taught to think with machines rather than through them.

But this isn’t destiny; it’s curriculum.

AI literacy is the new civic education. It means teaching children not only to code, but to ask: Who trained this model? What data shaped its beliefs? What voices are missing? It means encouraging students to play with AI creatively, but also to notice when it starts to play them. The best classrooms now integrate this self-awareness: they assign projects where students critique AI’s biases or rewrite its outputs to reveal blind spots. These small acts of resistance are, in fact, acts of authorship — reclaiming the right to co-create meaning with technology.

For parents and teachers, nurturing this kind of literacy is less about software and more about spirit. It begins with curiosity, skepticism, and empathy — the very traits AI cannot yet replicate. When a child learns to see the machine as a partner with limits, they inherit something more powerful than intelligence: perspective.

Because the danger isn’t that AI will outthink us — it’s that we’ll stop thinking without it.

And the solution isn’t to banish AI from childhood, but to teach children how to stand beside it without losing themselves in its reflection.

Bridging the Gap — Building AI Literacy and Ethical Inclusion

If inequality is not inevitable, then inclusion must be intentional.

Bridging the AI divide will not happen through optimism alone — it requires design, discipline, and empathy coded into every level of education and policy.

The work has already begun, though unevenly. Around the world, educators, technologists, and policymakers are sketching blueprints for a future where every child can think freely with intelligent tools. These efforts share one belief: the ethical use of AI begins long before the first line of code — it begins in the classroom.

1. Infrastructure: The Foundations of Equality

Before a child can learn with AI, they must be able to reach it. Projects like UNICEF and ITU’s Giga initiative are using machine learning and satellite data to map unconnected schools — more than a million so far — revealing where investment is most needed. This kind of transparency transforms empathy into logistics. Each dot on the digital map represents not a statistic, but a child waiting for a signal.

But connection alone isn’t enough. Devices must be usable, power reliable, and content relevant. Too many education systems import Western apps wholesale — elegant interfaces that speak none of the local languages. Localization is equity. AI must speak the mother tongue of the child who uses it, understand her idioms, and mirror her world.

2. Education: The New Literacy

Teaching AI literacy isn’t about turning every child into a programmer — it’s about turning them into participants.

This means understanding how AI works (and fails), recognizing bias, and learning to question outputs with confidence. Some schools already practice this. In Finland, students run “bias hunts,” testing how generative AI portrays different genders or ethnicities. In Rwanda, the Digital Skills Program trains youth not just to use apps but to build culturally relevant AI tools themselves. These classrooms produce creators, not consumers.
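
A classroom “bias hunt” can be as simple as counting. The sketch below assumes students have prompted a story generator several times, labelled by hand the role each story gave its main character, and now want to compare groups. The observations, the set of “domestic” roles, and the function name are invented for illustration; they are not data from any real study.

```python
# Hypothetical hand-labelled results: (prompted subject, role the story assigned).
observations = [
    ("woman", "homemaker"), ("woman", "doctor"),
    ("woman", "homemaker"), ("woman", "teacher"),
    ("man", "engineer"), ("man", "doctor"),
    ("man", "homemaker"), ("man", "banker"),
]

# Which labels the class agreed to count as domestic roles (an assumption).
DOMESTIC_ROLES = {"homemaker", "cook", "housekeeper"}


def domestic_share(rows: list[tuple[str, str]], subject: str) -> float:
    """Fraction of stories about `subject` that assigned a domestic role."""
    roles = [role for who, role in rows if who == subject]
    return sum(role in DOMESTIC_ROLES for role in roles) / len(roles)


women = domestic_share(observations, "woman")
men = domestic_share(observations, "man")
print(f"women: {women:.0%}, men: {men:.0%}, ratio: {women / men:.1f}x")
# prints: women: 50%, men: 25%, ratio: 2.0x
```

The arithmetic is the easy part. The learning happens in the arguments about which roles count as “domestic” and whether eight stories are enough to conclude anything.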

For teachers, the transformation is equally profound. They are no longer gatekeepers of knowledge but curators of discernment. Training programs for educators — from Europe to Sub-Saharan Africa — are slowly shifting toward AI ethics, creative pedagogy, and critical thinking. The goal is not to replace the teacher, but to give them a second mind, one that amplifies their reach without eroding their humanity.

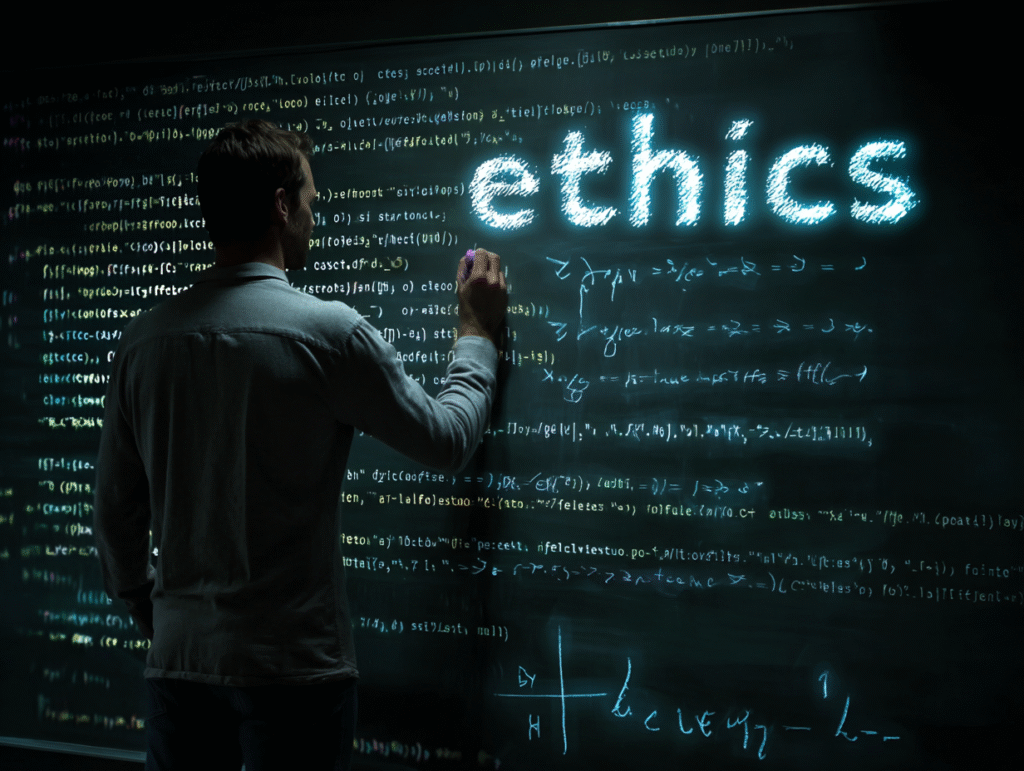

3. Governance and Ethics: Guardrails for the Future

The next frontier is moral architecture.

The EU’s AI Act classifies several educational uses of AI, such as systems that decide admissions or evaluate learning outcomes, as high risk, demanding transparency and oversight. UNESCO’s Recommendation on the Ethics of Artificial Intelligence (2021) calls for diverse development teams and continuous bias audits: a living standard, not a static law. And UNICEF’s Policy Guidance on AI for Children provides a global north star: AI must always act in the best interests of the child.

Ethics, however, cannot live only in regulation. It must be taught as a habit of thought. Imagine AI lessons where students co-design chatbots that prioritize kindness, or create small datasets that model fairness instead of prejudice. These exercises turn morality into muscle memory.

4. Participation: Designing With, Not For, Youth

The most radical idea in this movement is also the simplest: let children help shape the AI that shapes them.

Some pilot projects already invite students to train small models for local problems — like flood prediction, language preservation, or wildlife tracking. When youth see their data, culture, and ideas encoded into the machine, they stop being subjects of technology and become its co-authors.

This is AI literacy as empowerment — not just learning how algorithms work, but realizing you can rewrite them.

The bridge, then, is not made of silicon or policy alone. It is built from trust — between teacher and student, engineer and community, child and machine.

Because equity in AI is not just about sharing access; it’s about sharing authorship.

And perhaps, if we teach children not only how to use AI but how to humanize it, they will build systems gentler than the ones we gave them.

Closing — Alice’s Reflection

Night again, and every screen becomes a classroom. The glow of a single monitor lights a child’s face — half curiosity, half dream. She asks the machine a question not from her textbook, but from her heart: “Why do you know so much about me, and so little about where I live?”

The cursor blinks, thinking. The answer never quite comes.

This is where our journey through Alice in AI Land returns — to the mirror that talks back. In its surface we see not only data and code, but the reflection of our collective choices.

Because AI is not a destiny written by engineers; it is a language written by humanity. Every algorithm carries the fingerprints of its teachers. Every bias echoes a forgotten voice. Every opportunity shared, or denied, redraws the boundaries of who gets to imagine the future.

Alice steps closer to the screen. In one reflection, she sees children learning beside AI — questioning it, reshaping it, teaching it empathy. In the other, she sees children staring silently at answers they did not choose, their thoughts gently molded by systems they cannot see. The two reflections merge and blur — potential and peril intertwined.

If the first half of this century belongs to AI, then the second must belong to those who learn to humanize it. To build empathy into logic, and conscience into code. To ensure that a child’s accent, language, or poverty never becomes an algorithmic disadvantage.

So the challenge before us is not just technological, but moral and imaginative:

Can we raise a generation that sees AI not as magic, but as mirror?

Can we teach them that the mirror listens — and that they have the right to speak back?

The future of equality will depend on that conversation.

As Alice closes her laptop, the reflection fades — leaving only her, the quiet hum of the machine, and a question that feels like both warning and hope:

“Will the next child be shaped by this mirror… or will she shape it herself?”

Frequently Asked Questions

What is the “AI divide,” and how is it different from the traditional digital divide?

The traditional digital divide was about access — who had devices, internet, or electricity.

The AI divide goes deeper. It’s about understanding and authorship — who learns to co-think with AI versus who becomes passively shaped by it. It separates children who use AI critically and creatively from those who only consume its output.

How does algorithmic bias affect children and education?

AI systems learn from human data — which often carries social and cultural bias.

When these systems generate stories, images, or grades, they may reinforce stereotypes or exclude underrepresented groups. For children, this can subtly teach inequality as normal. Building diverse, transparent datasets and teaching AI literacy in schools are key to breaking that cycle.

Can AI actually help reduce global inequality?

Yes — if designed ethically.

AI can personalize education, translate learning into local languages, and reach children in remote or underserved areas through low-bandwidth solutions.

Projects like Eneza Education, Kolibri, and UNICEF’s Project Giga already show how inclusive AI can bridge educational and geographic gaps.

What role should teachers and parents play in the AI era?

Teachers are becoming ethical guides rather than gatekeepers of information.

They help children question algorithms, spot bias, and use AI as a tool for exploration rather than dependency.

Parents can nurture curiosity and balance — encouraging kids to ask not only “What did the AI say?” but “Why did it say that?”

What is AI literacy, and why is it important for children?

AI literacy means understanding how intelligent systems work, what data they use, and how to question them responsibly.

It’s the new civic skill of the century — as essential as reading or writing. Teaching AI literacy early helps children protect their privacy, spot manipulation, and participate in the digital world as creators, not consumers.

How can policymakers and tech companies bridge the AI gap ethically?

They must invest in infrastructure, inclusion, and oversight:

- Provide affordable internet and devices for all schools.

- Fund AI tools localized for different languages and cultures.

- Require bias audits and transparency in educational AI systems.

- Collaborate with communities so technology reflects the children it serves.

True progress depends on empathy written into policy as much as code.

Why is empathy important in AI design for children?

Because data alone can’t teach compassion.

Empathy ensures that AI respects human difference — not just efficiency.

When algorithms are trained to value fairness, accessibility, and cultural understanding, they stop being mirrors of inequality and start becoming bridges of opportunity.

What is Alice in AI Land, and why does it explore these themes?

Alice in AI Land is an ongoing educational and philosophical project that explores how artificial intelligence intersects with childhood, learning, and ethics. Inspired by the curiosity and wonder of Alice in Wonderland, it invites readers to travel through the surreal landscape of the digital age — where algorithms, data, and imagination meet.

Each article acts as both a guide and a mirror, helping parents, educators, and thinkers navigate AI’s influence on the next generation.

Because understanding AI is not just about technology — it’s about what kind of humanity we choose to teach alongside it.