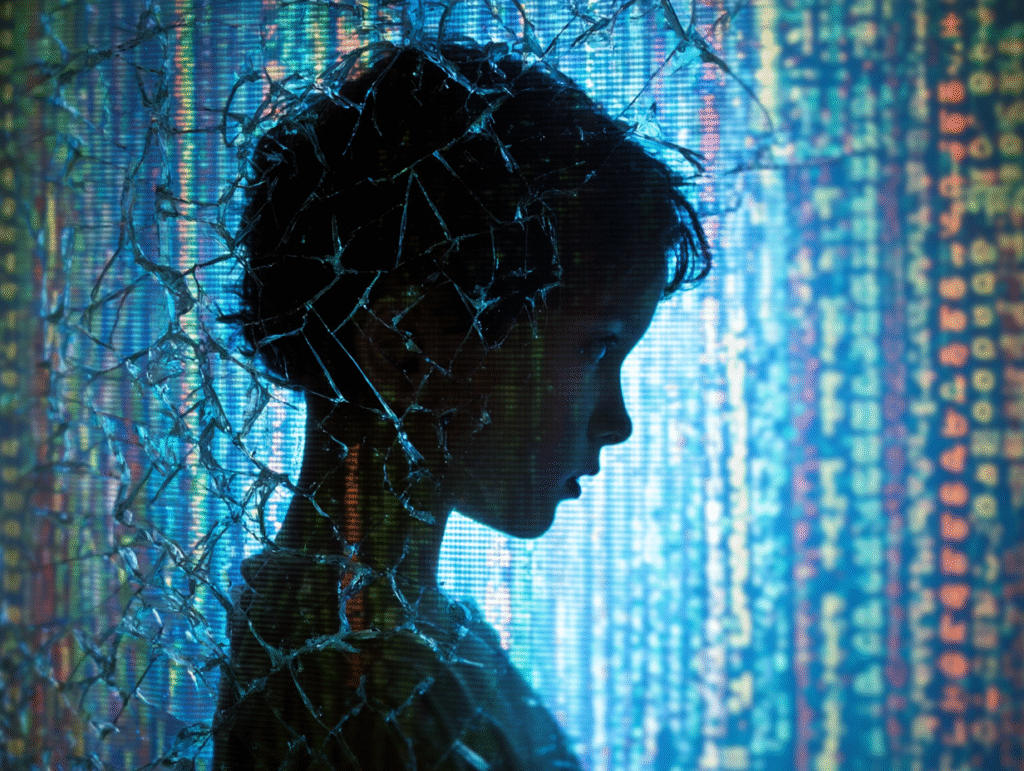

AI and Children’s Safety: Growing Up in a Digital Wonderland

Once upon a time, parents taught children to look both ways before crossing the street.

Today, they must also teach them to look both ways before clicking “Accept.”

Artificial intelligence has become part of children’s daily lives — sometimes quietly, sometimes boldly. It’s the invisible hand behind video recommendations, the voice that answers homework questions, the filter that hides violent content, and the toy that listens and learns. It promises protection and possibility, yet carries the shadow of constant observation.

AI watches over children — sometimes like a guardian angel, sometimes like the all-seeing eye of the Queen of Hearts. It protects, but it also records. It comforts, but it also collects. The same algorithm that shields a child from online predators might also track their face in a classroom, log their mood through a smartwatch, or listen in on their bedtime chatter with a toy.

Parents and educators now face a paradox: to keep children safe, they must invite technology into the most intimate spaces of their lives. The living room becomes a classroom; the tablet, a playground; the voice assistant, a friend. But in doing so, they open the door to a new kind of Wonderland — one filled with bright inventions, secret corridors, and doors that don’t always say what’s on the other side.

At Alice in AI Land, we believe curiosity and caution must walk hand in hand. This article explores how AI is reshaping the meaning of safety in childhood — across digital spaces, physical environments, emotional well-being, and privacy. It asks: How do we build a world where children can grow up safe, without growing up watched?

Because in this new Wonderland, the line between guardian and gatekeeper has never been thinner.

The Promise of AI for Children’s Safety

If Wonderland has its dangers, it also has its guardians.

AI, when designed with care, can become one of them.

Every day, artificial intelligence shields millions of children from harm — often quietly, behind the screen. It moderates the endless flow of videos, blocks explicit content, flags predatory messages, and alerts parents when danger looms. For the first time in history, machines are helping adults protect children at a scale no human workforce ever could.

Digital Guardianship

On social platforms, AI acts as a tireless sentry. It scans messages for grooming behavior, detects cyberbullying, and identifies child exploitation material before it spreads. Microsoft’s Project Artemis and nonprofit initiatives like Thorn have become digital defenders, using algorithms to detect online predators and alert authorities. Parental tools such as Bark and Qustodio employ AI to scan children’s messages for red flags — violence, harassment, suicidal thoughts — allowing parents to intervene before harm escalates.

The algorithms are not perfect, but they are credited with saving lives. Schools that use AI safety systems like Gaggle have reported stepping in before suicide attempts after the software flagged dangerous searches or self-harm notes. When tuned well, these systems function like watchful White Rabbits: darting ahead, noticing what humans might miss, whispering, “Something isn’t right here.”

Physical Protection

AI has also stepped out of the digital world to guard children’s physical safety. Smartwatches and GPS trackers alert parents when a child wanders too far from home. Some cars now include sensors that detect if a baby has been left behind in the backseat and send emergency alerts. In homes, AI-powered cameras can distinguish a child’s cry from background noise or identify dangerous situations: a toddler near a pool, a window left open, a sudden fall.

Even urban systems are becoming smarter. Some cities are experimenting with AI-assisted traffic lights that hold traffic longer when children cross, adapting to their walking pace. It’s as if the streets themselves have learned to care.

Emotional and Mental Well-Being

Beyond safety from harm, AI can also support children’s inner worlds. In places where counseling is rare or stigma remains high, AI chatbots like Woebot and Wysa Kids provide a gentle, accessible way to express emotions. They guide children through mindfulness, teach emotional vocabulary, and offer comforting responses during anxious moments.

While these tools can never replace a human heart, they can serve as bridges — small helpers that listen when no one else is around. In the right hands, they become emotional safety nets, helping children navigate loneliness, fear, and uncertainty.

Health and Well-Being

AI also supports physical health. It powers wearable devices that track sleep patterns, detect stress, and even predict potential health issues. Pediatric AI diagnostics are being tested to identify diseases earlier — giving doctors a new edge in prevention. Some tools monitor children’s digital activity to spot signs of burnout or digital fatigue, nudging them toward healthier habits.

Metaphor: The White Rabbit’s Watch

AI’s promise lies in its timing — like the White Rabbit’s pocket watch, always ticking at the right moment. It notices what humans cannot: the hidden risks, the subtle changes, the quiet cries for help that would otherwise go unheard. When used wisely, AI becomes not a spy, but a sentinel — protecting childhood without stealing it.

But in every fairy tale, guardians can become gaolers. The same tools that protect can also pry, track, and record.

And that is where our next step through Wonderland leads — the perils.

The Perils of AI in Child Safety

Every guardian in Wonderland has a shadow.

For every White Rabbit that helps, there is a Queen of Hearts shouting, “Off with their privacy!”

AI may protect children from danger — but it can also place them under constant watch.

And when safety becomes surveillance, innocence quietly changes shape.

Surveillance Overreach

In the name of protection, AI has given rise to unprecedented monitoring. Schools now use facial recognition for attendance, cafeterias, or security checks — even to detect “unusual behavior.” Parents install apps that read every message and scan every photo. Smart toys record conversations, smart cameras track body movements, and some governments even use AI to enforce curfews for children’s online gaming.

What begins as care can turn into control. A world where every move is tracked might be safer — but it is also smaller. When children grow up believing someone is always watching, they may lose the sense of privacy that gives birth to freedom.

Privacy Erosion

AI runs on data — and children are its most vulnerable source. Many devices and apps silently gather their voices, images, locations, and emotions. The “Hello Barbie” doll once uploaded children’s conversations to cloud servers. Other smart toys like CloudPets exposed kids’ voice recordings in data breaches. Even child-friendly apps have been caught secretly tracking locations and browsing habits.

The same tools that listen to protect can also listen to exploit. Without strong rules and transparency, childhood becomes a data stream — a record of thoughts, moods, and mistakes that may never be erased.

Algorithmic Misjudgment

AI systems don’t just watch — they judge. But their judgment is often flawed.

School monitoring tools such as Gaggle or Bark sometimes raise thousands of false alarms: innocent homework files flagged as “porn,” casual jokes mistaken for cries for help. These errors can lead to fear, embarrassment, or even police involvement for children who did nothing wrong.

When the Queen’s algorithm misfires, no one shouts “Off with their heads” — but the damage can still be deep. Children deserve protection, not suspicion.

Emotional Dependency

AI companions, built to comfort, can also confuse. Children may form deep attachments to chatbots or voice assistants that always agree, always respond, and never get tired.

Unlike real friends, AI companions can’t teach empathy, compromise, or authenticity. When a digital friend always listens, human patience may start to feel less magical.

Some children confide in chatbots without realizing their words are stored, analyzed, or even used for commercial purposes. Others may turn to AI for advice in moments of distress — only to receive shallow or inappropriate responses.

When care becomes code, emotional safety can become emotional substitution.

Manipulation and Exploitation

AI can also be used to manipulate, not protect. Algorithms designed for engagement can expose children to addictive content or influence their behavior — not for their good, but for profit.

Worse, AI has been exploited to generate deepfake child abuse material, grafting real children’s faces onto fabricated scenes. The Internet Watch Foundation reported a sharp rise in such material in 2024, a horror only possible because of AI’s power.

The danger isn’t AI itself, but how humans wield it — whether as guardians or as merchants of attention.

Metaphor: The Queen of Hearts

The Queen believes she’s protecting Wonderland by controlling it. She watches, she commands, she punishes — all in the name of order. But in doing so, she destroys the very innocence she seeks to guard.

So too can AI, if left unchecked: a ruler obsessed with security, forgetting that safety without freedom is just another kind of cage.

AI is not evil, but it is powerful — and power must always be balanced.

The next step in our journey is to find that balance: how to trust without surrendering, and how to guide without spying.

Balancing Trust and Caution

The hardest lesson in Wonderland is knowing which doors to open.

Too much fear, and the child never explores.

Too much trust, and the child gets lost.

Artificial intelligence has made this dance between trust and caution more delicate than ever. Parents want to protect, but not smother. Educators want to teach, but not track. And children — bright, curious, impulsive — want to explore the rabbit holes of the digital world without realizing how deep they go.

There is no switch that turns safety on or off. There is only awareness.

Digital Literacy: The New Stranger Danger

In the past, children were warned not to talk to strangers. Today, the “stranger” might be a friendly chatbot that remembers their favorite color.

Digital literacy isn’t just about coding or using apps — it’s about understanding how and why AI behaves the way it does. Teaching children that “AI doesn’t feel, but it learns,” or “algorithms show what they think we’ll like, not what’s true,” can transform fear into knowledge.

Children who understand AI are harder to manipulate, not easier.

They see behind the curtain.

Parental Co-Exploration

Parents don’t need to be programmers to guide their children.

They only need to be present.

Instead of banning all AI tools or blindly trusting them, parents can explore together. Try asking questions while using AI:

- “Who do you think made this app?”

- “How does it know what to show you?”

- “Do you think it’s always right?”

These small conversations teach digital discernment — the habit of thinking before clicking. The goal isn’t to raise fearful children, but conscious ones.

Healthy Boundaries, Not Digital Walls

AI tools for monitoring can be useful — but only if used with consent and care.

When parents secretly track every message, they don’t just invade privacy; they erode trust. Safety shouldn’t come from fear of being watched, but from the child’s own understanding of what’s safe.

Practical approaches include:

- Setting shared screen rules (not unilateral bans).

- Reviewing privacy settings together once a month.

- Explaining what “data” really means: “It’s like leaving footprints — the more you walk, the more you reveal.”

- Encouraging reflection: “Would you tell this secret to your AI friend?”

Boundaries protect when they’re built on mutual respect — not suspicion.

Emotional Literacy: The Invisible Shield

Children who can name their emotions can also defend them.

Teaching emotional awareness — joy, frustration, curiosity, shame — helps them recognize when technology manipulates their feelings.

When a child says, “This game makes me angry when I lose,” that’s not failure — it’s awareness.

AI may shape emotional patterns through design (notifications, rewards, dopamine loops), but a child who understands their emotions is less likely to be ruled by them.

Metaphor: The White Rabbit’s Clock

The White Rabbit never stops checking the time. Not because he fears it — but because he respects it.

Timing matters in digital safety.

Teach children early, talk openly, update regularly. Waiting until danger strikes is like checking the clock after the tea party is over.

Safety in Wonderland isn’t about hiding behind walls; it’s about walking wisely through open doors.

Because every click, every prompt, every voice command is a moment of choice — a tiny test of trust between human and machine.

The Global Dimension – Rights, Ethics, and Governance

Wonderland is vast.

Each country builds its own doors, writes its own rules, and names its own queens.

But children cross borders with every click — and AI follows them wherever they go.

The safety of children in the age of AI is no longer just a personal matter; it is a global responsibility. And like all global challenges, it requires shared principles to prevent shared harm.

UNICEF – The Child at the Center of Design

UNICEF’s Policy Guidance on AI for Children is built on a simple but profound truth: AI must serve the best interests of the child.

It lays out nine requirements for child-centered AI, including fairness, inclusion, privacy, and accountability, and urges governments and companies to treat children not as data points, but as citizens with rights.

UNICEF calls for AI systems that are child-centered, transparent, and empowering, especially in developing regions where technology may leap ahead of regulation.

In countries like Rwanda and India, AI-powered education and health tools are being tested with direct oversight from child rights experts — ensuring progress doesn’t outpace protection.

UNICEF reminds the world that safety is not only about keeping children from harm, but also about giving them access to the benefits of innovation.

Because exclusion, too, can be a form of danger.

UNESCO – Ethics in the Classroom

UNESCO’s reports on AI and Education warn that schools must use AI wisely — not to replace teachers or judge children, but to support their growth.

AI should never be used to label a child’s potential or limit their curiosity.

In 2021, UNESCO’s Recommendation on the Ethics of Artificial Intelligence, adopted by all 193 Member States, called for systems that protect human dignity and cultural diversity while opposing those that exploit or manipulate children.

The message is clear: when AI enters the classroom, it must sit quietly — as an assistant, not as the teacher.

The EU AI Act and Global Regulation

In Europe, the EU AI Act treats several AI systems used in education, such as those that decide admissions, evaluate learning outcomes, or monitor students during exams, as “high-risk.”

This means such systems must meet strict requirements for risk management, transparency, and human oversight. Systems that manipulate people or exploit vulnerabilities due to age are banned outright, as is emotion recognition in schools except for medical or safety reasons.

In the United States, the Children’s Online Privacy Protection Act (COPPA) limits data collection from children under 13, while newer proposals like the Kids Online Safety Act (KOSA) aim to hold platforms accountable for design choices that harm young people’s mental health.

Elsewhere, countries like Japan, Brazil, and South Korea are drafting similar child-focused frameworks.

Yet much of the world still lacks even the basic scaffolding of AI governance. In many regions, children’s data flows freely across borders, often to companies they’ll never know.

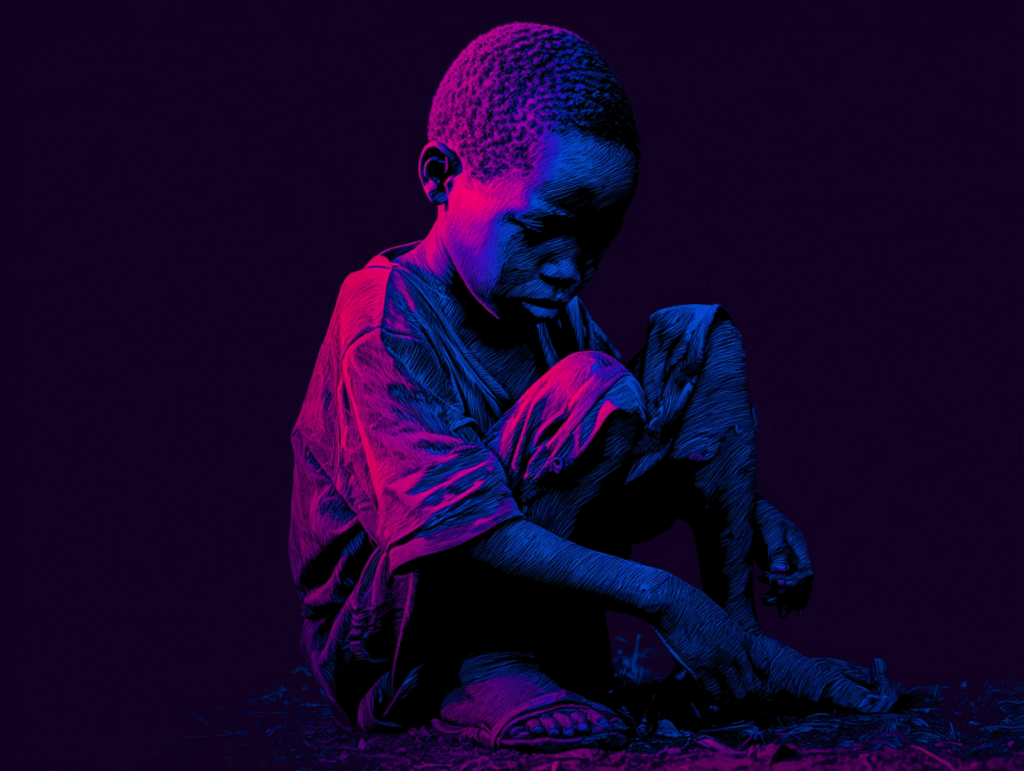

Equity and Access

AI safety is also a story of inequality.

In some countries, children use AI-powered tutors and safety filters. In others, they barely have Internet access — or rely on unregulated tools that may exploit them.

UNICEF’s Digital Connectivity project warns that “a connected child without protection is a child at risk.”

The digital divide has become a safety divide — where the most vulnerable children, often in low-income regions, face both technological exclusion and exploitation.

True global safety means ensuring not only that AI protects children from harm, but that it protects every child, everywhere.

Metaphor: The Cheshire Cat of Governance

The Cheshire Cat appears in every corner of Wonderland — but never looks the same twice.

So it is with AI laws: smiling in Europe, fading in parts of Asia, reappearing differently in the Americas.

Each grin means something different, and yet the eyes — the watching eyes of technology — remain the same.

Until nations agree that protecting children’s rights is more important than competing for technological dominance, the grin will linger — without a body to hold it accountable.

Safety is not a privilege to be sold by geography; it is a right written into the soul of childhood.

And if AI is to shape our shared future, it must learn to serve that right — before it learns to rule it.

In every version of Wonderland, there is a table.

Around it sit parents, teachers, inventors, lawmakers — and, quietly humming in the corner, the machines they’ve created.

The tea party is crowded, sometimes chaotic, but one truth remains: no one can keep children safe alone.

AI is too vast, too quick, too deeply woven into modern life. Safety is no longer a task for a single guardian — it’s a covenant between all who build, teach, and guide.

Parents – The Gatekeepers of Digital Doors

Parents remain the first and most powerful line of defense.

Not by switching everything off, but by tuning in to awareness, dialogue, and example.

When parents model mindful tech use, children imitate it.

When they ask, “Why does this app need your photo?” or “Who do you think sees what you post?”, they teach reflection better than any warning label could.

The key isn’t control — it’s connection.

The parent who listens becomes the parent who’s trusted, and trust is the safest firewall in the world.

Educators – Teaching the Code of Consciousness

Teachers once taught reading, writing, and arithmetic.

Now they must also teach algorithms.

AI literacy isn’t just for computer labs — it belongs in art, literature, and ethics classes too.

Children should understand how AI shapes what they see, what they believe, and even what they desire.

They should learn that behind every “smart” system lies human intent — someone’s design, someone’s data, someone’s bias.

The classroom is where critical thinking begins. And in a world full of digital illusions, thinking critically is a form of protection.

Companies – The Architects of the Maze

The greatest responsibility rests with those who build the machines.

Every line of code carries a choice — to serve or to exploit, to illuminate or to manipulate.

Tech companies must commit to child-first design: privacy by default, safety before engagement, transparency before profit.

Some have begun to follow this path — adding child safety boards, open-data audits, and AI explainability tools. But too often, ethics are treated like accessories rather than architecture.

True guardianship means asking, with every new invention: “Would I want my own child to use this?”

If the answer is silence, the design is not yet safe.

Governments – The Keepers of the Keys

Governments hold the power to set the rules of the game — but power without courage serves no one.

They must balance innovation with regulation, growth with protection.

That means stronger data laws, ethical certifications, independent audits, and global cooperation on AI standards.

Policies must protect both the child’s right to safety and their right to curiosity.

Because the safest world is not the one where children are locked away — it’s the one where they can explore freely without being harmed.

Metaphor: The Tea Party of Responsibility

In Wonderland, the tea table is never empty. Cups clink, questions whirl, and no one ever finishes the story.

That’s what real safety looks like: an ongoing conversation between every seat at the table.

Parents, educators, companies, and governments — each must listen as much as they speak.

For in this new era, responsibility isn’t a role.

It’s a rhythm — a shared pulse that keeps the whole of Wonderland alive.

The future will not be shaped by AI alone, but by the wisdom of those who guide it.

And the question every guardian must ask is not “What can AI do?” but “What should AI do — for our children?”

Conclusion – Teaching Children to Walk Safely in Wonderland

Every generation teaches its children how to survive the world it creates.

We once taught them to ride bicycles, cross streets, and lock their doors.

Now, we must teach them how to speak to algorithms — and how to recognize when algorithms are speaking back.

The age of AI has given children both wings and mirrors: wings to explore further than any child before, and mirrors that reflect their every move.

Our task is not to break those mirrors, nor to clip those wings, but to make sure they fly in light, not in shadows.

AI will never be a substitute for human guidance — it can only magnify it.

A child’s laughter cannot be coded. Their curiosity cannot be trained by data. Their safety, ultimately, depends not on machines, but on the hearts and minds of those who design and regulate the machines and raise the children.

Safety is not about fear — it’s about freedom with understanding.

When children learn how AI works, where it listens, and how to question it, they grow not only safer but wiser.

Because in the end, Wonderland isn’t the problem.

It’s the forgetting — the forgetting that even the strangest world can be navigated with the right map, the right guides, and the right questions.

The future of childhood depends on how gently we hold both the child and the machine — not as enemies, but as companions in a shared evolution.

And so we return to where we began:

the crossroads between caution and curiosity.

Where the parent holds a hand,

the teacher lights a path,

and the White Rabbit whispers, “Stay curious — but know where the rabbit hole leads.”

Frequently Asked Questions

How can AI improve children’s safety online?

AI can enhance children’s safety by detecting harmful content, monitoring online behavior, and filtering risks before exposure occurs.

More advanced AI systems can recognize grooming patterns, cyberbullying language, or self-harm indicators across platforms in real time. Beyond reaction, AI can also serve as a preventive force—personalizing parental dashboards, flagging emotional distress, and teaching digital empathy through interactive tools.

What are the biggest risks of AI for children?

The main risks include data privacy breaches, algorithmic bias, emotional manipulation, and over-surveillance.

AI may collect excessive personal data from children’s devices, shaping their behavior or influencing emotions through recommendation algorithms. Biased systems can reinforce stereotypes or exclude certain children, while constant digital monitoring can undermine autonomy and trust.

Can AI replace parental guidance in keeping children safe?

No—AI can support but never replace parental guidance.

While AI provides real-time protection and alerts, it lacks the emotional intelligence, moral reasoning, and personal trust that parents offer. The healthiest dynamic is co-governance: AI as a digital ally, parents as moral anchors.

How can parents use AI tools responsibly for their children?

Parents should use AI tools with clear boundaries and an understanding of data ethics.

Start by reading privacy policies, turning off unnecessary data tracking, and using child-specific AI platforms with transparent safeguards. Most importantly, involve the child—teach them why the tools exist, turning digital protection into shared awareness instead of hidden surveillance.

What role should schools play in AI safety for children?

Schools can integrate AI literacy into curricula and ensure ethical tech policies in classrooms.

Beyond teaching students how to use AI tools, schools should foster critical thinking about what AI sees, collects, and decides. Transparent school-AI partnerships can model digital responsibility and promote fairness in algorithmic use.

How can governments and tech companies ensure children’s data is protected?

Through stricter regulations, transparent policies, and child-focused design standards.

Laws like the EU’s GDPR and the U.S. COPPA already set precedents, but enforcement remains uneven. The next evolution is child-centric AI ethics—systems built with minimal data retention, explainable decision processes, and age-appropriate consent mechanisms.

How does AI affect children’s mental and emotional well-being?

AI can both support and harm children’s mental health.

Therapeutic chatbots and adaptive learning tools can offer emotional support, but algorithmic feeds can increase anxiety, envy, or loneliness. The key lies in intentional design: AI that nurtures self-esteem and curiosity rather than exploiting attention.

What are the ethical challenges of using AI with children?

The biggest ethical challenges involve consent, transparency, and manipulation.

Children often can’t understand what data they’re giving or how it’s used. Ethical AI must be explainable in child-friendly ways, avoid psychological nudging, and prioritize human dignity over engagement metrics.

What is being done globally to make AI safer for children?

Organizations like UNICEF, UNESCO, and the OECD are shaping international frameworks for child-friendly AI.

UNICEF’s Policy Guidance on AI for Children emphasizes fairness, accountability, and participation. Meanwhile, regional initiatives—from the EU’s AI Act to Japan’s “Society 5.0” vision—highlight the global movement toward ethical innovation that protects the young.

What can families do today to build trust in AI?

Families can build trust by combining curiosity with caution.

Explore AI tools together, discuss how they make decisions, and set digital family rules around privacy and screen time. Trust doesn’t come from blind acceptance—it grows from shared learning, open dialogue, and informed boundaries.

How does AI intersect with children’s rights and digital autonomy?

Children have the right to privacy, dignity, and informed participation in their digital environments.

AI must evolve to respect those rights — offering explainable choices, minimal data retention, and no manipulative design. Digital autonomy means teaching children to ask: “Why does this system want my attention?” instead of simply obeying it.

Could AI surveillance harm children more than it helps?

Excessive surveillance can breed mistrust and inhibit growth.

When every move is tracked, children learn to hide rather than communicate. AI monitoring should be protective, not possessive — safeguarding from external harm while respecting internal freedom. The goal is guidance, not digital control.

What’s the future of AI and children’s safety?

The future depends on human choices, not algorithms.

If we build systems centered on empathy, transparency, and education, AI can become an ally in childhood — a guardian, not a gatekeeper. But if profit and speed dominate design, AI may become an invisible authority that rewrites the meaning of protection itself.