AI for Children: Promise and Peril

Every generation grows up with a new tool that reshapes how children see the world. For our grandparents, it might have been television. For our parents, the first wave of personal computers. For today’s children, that tool is artificial intelligence.

Some kids already talk to AI assistants before they can spell their own names. By the time they turn twelve, many will have written stories with a chatbot, drawn pictures with a generator, or had their screen time quietly managed by an algorithm. AI has slipped into children’s lives not with a bang, but with a whisper.

That whisper matters. AI can open extraordinary possibilities: a struggling student might finally understand fractions with the help of a patient digital tutor; a child with dyslexia might find their voice through speech-to-text tools; a lonely teen might find a safe space to talk when no one else is around.

But the same technology can also mislead, overexpose, and manipulate. Algorithms don’t just guide learning; they also decide what videos autoplay, what ads pop up, and what information feels “true.” AI holds both promise and peril, often in the very same hand.

This is where Alice in AI Land begins. Our project isn’t about hype or fearmongering. It’s about asking: How do we make sure children grow up with AI in ways that nurture, not diminish, their humanity? Like Alice stepping into Wonderland, today’s kids wander into a landscape both wondrous and bewildering. Our role is not to keep them from entering, but to guide them safely through.

The Individual Dimension – How AI Touches a Child’s Life

AI doesn’t arrive in a child’s life as one single invention. It shows up in dozens of little ways: a homework helper, a game character that “learns” your moves, a filter that changes a photo, a voice that reads bedtime stories. To understand its impact, we need to look at how AI touches children day to day — in classrooms, in play, and in the quiet corners of their inner world.

Education & Learning

Imagine a tutor who never loses patience, who adapts to a child’s pace, and who can explain the same math problem ten different ways until it finally clicks. That’s the promise of AI-driven learning. These tools can lift struggling students, challenge advanced ones, and offer individualized attention in ways classrooms often can’t.

But there’s a catch: if every answer is just one prompt away, what happens to the struggle that builds resilience? Children may lean too heavily on AI, letting it think for them rather than learning how to think for themselves. Just as calculators changed math education, AI will change how kids write, research, and reason, and it’s up to adults to make sure children still flex their own mental muscles.

Creativity & Play

Children’s imaginations are boundless — and AI can act like an amplifier. With a few words, a child can conjure a dragon illustration, compose a melody, or co-write a bedtime story. For kids who feel “not artistic,” these tools can unlock doors to self-expression.

Yet play isn’t just about producing a polished product. It’s about discovery, messiness, and invention. If AI always fills in the gaps, children might start to expect perfect results instead of enjoying the wobble of learning. The risk isn’t that AI kills creativity; it’s that it might make play too efficient, too polished, leaving less space for imagination’s happy accidents.

Safety & Well-being

AI already acts as a guardian in digital spaces. Filters scan for harmful content; health apps track sleep and mood; some therapy bots even listen when a child feels anxious and alone. For many families, these tools are lifelines.

But safety can flip into surveillance. The same technology that blocks explicit videos can also gather a child’s viewing habits, location, or private words — data that may be used for advertising or, worse, fall into the wrong hands. And while a chatbot might comfort a lonely teen at 2 a.m., it cannot replace the warmth of a human relationship. Children may come to trust AI voices too much, blurring the line between genuine care and programmed responses.

In short: AI in a child’s personal world is both tutor and tempter, companion and collector. It can help them thrive, but it can also quietly reshape how they learn, play, and feel safe. Like Alice wandering deeper into Wonderland, children encounter wonders that delight and puzzles that disorient. Our role is to notice both.

The Global Dimension – Children in a Changing World

AI doesn’t just shape a child’s private world of school, games, and screens. It also reshapes the world they inherit — the opportunities they’ll have, the dangers they’ll face, and the fairness of the systems around them. Seen globally, AI can be both a ladder and a wall.

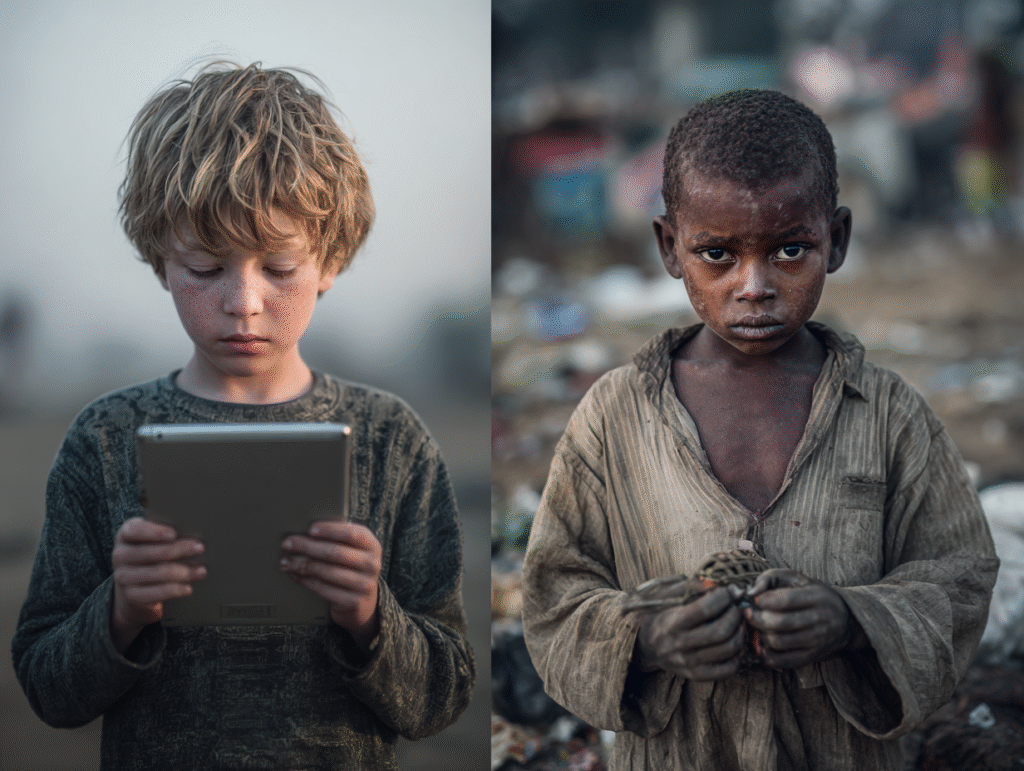

Equity & Access

For some children, AI could be the bridge that brings education within reach. Imagine a village where there are too few teachers, but an offline AI tutor on a tablet can guide a child through math or reading. Or imagine a child with a disability who gains access to speech recognition and translation tools that level the playing field.

Yet this promise is unevenly distributed. A 2020 UNICEF and ITU report estimated that two-thirds of the world’s school-age children had no internet connection at home. In wealthy households, AI tutors might be just another app. In poorer households, children may not even have electricity, let alone connectivity. Unless access gaps are closed, AI risks widening inequalities, accelerating those already ahead and leaving the rest even further behind.

Health & Safety

AI can serve as a quiet guardian for children everywhere. In hospitals, algorithms can help detect diseases earlier than doctors’ eyes alone. In humanitarian work, predictive models can spot hunger crises or outbreaks before they spiral. Relief agencies are beginning to use AI to track displaced families, making sure aid reaches children fleeing war or disaster.

But these same technologies raise new questions. When children’s health data is collected, who safeguards it? When surveillance drones fly over conflict zones, who ensures they protect rather than expose? AI can help heal, but in careless hands, it can also harm.

Conflict & Exploitation

In the harshest parts of the world, AI is already a double-edged sword. Autonomous systems in warfare could turn children into collateral damage if machines fail to tell combatants from civilians. Online, generative AI makes it easier to create fake or exploitative content, putting children at risk of manipulation and abuse.

At the same time, AI can be a tool for protection: scanning the web for abusive material, or guiding humanitarian drones to clear mines before a child steps near them. Whether AI in global crises becomes a protector or a predator depends less on the code itself, and more on the choices of those who wield it.

In short: AI is not just entering children’s homes — it is reshaping the very stage of childhood worldwide. For some, it’s a chance to leap ahead; for others, a new barrier to climb. Like Alice stepping into strange lands, children everywhere must navigate terrain transformed by forces far beyond their control.

The Wonderland Lens – Why Alice?

Why call this project Alice in AI Land? Because for children, stepping into a world shaped by artificial intelligence feels a lot like tumbling down the rabbit hole. Everything looks familiar, yet slightly strange. Objects behave in unexpected ways. Rules bend. Power shifts. And hidden dangers lurk behind whimsy.

Through the Wonderland lens, AI’s presence in children’s lives comes alive in metaphor:

- The White Rabbit = Algorithms. Always running ahead, beckoning children to follow. Recommendation engines on YouTube or TikTok pull them deeper and deeper into tunnels of content. The Rabbit is neither friend nor foe — but if children follow without guidance, they may end up far from where they meant to be.

- The Queen of Hearts = Unchecked Power. Loud, commanding, unpredictable. Platforms and companies that prioritize engagement or profit over children’s welfare act like this Queen — quick to shout “Off with their heads!” without caring who gets hurt. Without oversight, AI can turn from playful to tyrannical in an instant.

- The Cheshire Cat = Personalization. Smiling, fading, reappearing — personalization seems helpful, even magical, as content bends to a child’s tastes. But just as the Cat’s grin could be unsettling, personalization can manipulate moods, narrow horizons, or keep children looping through the same ideas until they lose sight of the bigger world.

And perhaps most importantly: Alice = every child. Innocent, curious, resilient — but also vulnerable. She doesn’t walk through Wonderland alone. She meets guides, tricksters, and rulers along the way. Which raises the essential questions: Who protects Alice? Who guides her? Who writes the rules of the game?

By looking at AI through this story lens, we remind ourselves that technology is never neutral. Like Wonderland, it can be delightful, absurd, or dangerous depending on who sets the stage and who watches over the journey.

Note: Alice in AI Land is a live project. These metaphors — the Rabbit, the Queen, the Cat — are evolving as we explore them. We invite you to grow this Wonderland with us.

Responsibility – Who Holds the Keys?

In Wonderland, Alice was never alone. She met guides, tricksters, and rulers — some who helped, some who misled. In our world, the question of who guides children through AI is not a riddle but a responsibility. The keys are in many hands.

Parents and Families

At home, parents are the first gatekeepers. They decide when children first meet AI — whether through a toy that “talks back,” a homework assistant, or a streaming app that recommends the next video. Parents set the tone: is AI treated as a teacher, a tool, a toy, or a babysitter? Simple family practices, like co-using AI with kids, setting time windows, and talking openly about what the machine does (and doesn’t do), can make the difference between empowerment and dependence.

Educators

In schools, teachers don’t just teach math or history anymore — they also shape digital literacy. Introducing AI responsibly means showing students how to use it critically: to check sources, to ask where an answer comes from, to question bias. Just as schools once taught children to read books critically, now they must teach them to “read” AI with the same care. Without this, students risk growing up fluent in using AI but illiterate in understanding it.

Companies

Tech companies build the tools children touch every day. Their responsibility is to design with children in mind — not just as users, but as developing humans with rights and vulnerabilities. That means creating kid-safe modes, minimizing data collection, and being transparent about how algorithms work. Too often, profit and engagement win over protection. But designing ethically for children isn’t optional; it’s the difference between building a playground and setting a trap.

Governments & Society

No family or teacher can shoulder the burden alone. Governments write the rules of the game: laws on data protection, safeguards against exploitation, requirements for age-appropriate design. International organizations like UNICEF and UNESCO have already called for child-centered AI principles. Societies, too, must decide: do we see children as citizens with rights in a digital future, or as just another market to be mined?

In short: Guiding Alice through AI Land is a shared task. Parents light the path at home, teachers guide in classrooms, companies shape the landscape, and governments set the boundaries of the game. When any one of these hands lets go, the child walks alone — and Wonderland can quickly turn from curious to cruel.

Conclusion

The question is no longer if AI will touch children’s lives; it already has. It is in classrooms, on screens, in toys, and even in hospitals. The real question is how it will shape childhood, and who will help shape it alongside children.

We’ve seen that AI can be a patient tutor, a spark for creativity, a quiet guardian of safety. But it can also collect data silently, feed endless distractions, or mislead with convincing falsehoods. Globally, it may widen divides as easily as it bridges them. In this sense, AI is not one story, but two unfolding at the same time: a story of promise, and a story of peril.

That is why Alice in AI Land exists. We are here to explore this strange new territory with honesty, curiosity, and responsibility. Like Alice stepping into Wonderland, today’s children enter a landscape full of marvels and dangers. Our task is not to shut the door behind them, but to make sure the path ahead is lit.

This article is only a beginning. In the weeks ahead, we’ll dive deeper — into AI and education, AI and creativity, AI and safety, and the ethics that bind them all. Each piece will expand the map, so parents, teachers, and society at large can guide children through this new world with care.

The question isn’t whether children will grow up with AI — it’s whether they will grow up well with AI.

And so, Alice in AI Land begins here.

Frequently Asked Questions

What are the benefits of AI for children?

AI can help children learn faster, create more freely, and stay safer online. It offers personalized tutors, creative tools for art and storytelling, and early health or safety alerts.

When used responsibly, AI can expand opportunities for children to explore their interests, develop skills at their own pace, and access support they might not otherwise receive.

What risks does AI pose for children?

The biggest risks are over-reliance, privacy violations, and manipulation through biased or harmful content. AI can also widen inequality if access is limited to wealthier families or regions.

Children may lose essential skills if AI replaces too much of their learning, or they may be exposed to unsafe experiences if parents and educators are not involved.

How can AI support children’s education?

AI can adapt lessons to each child’s learning style, provide instant feedback, and offer tools that make learning more interactive. It can also translate across languages and support children with disabilities.

For example, an AI tutor might slow down for a child struggling in math while pushing another forward into new challenges. This flexibility can help children gain confidence and keep learning engaging.

Can AI improve children’s creativity and play?

Yes, AI can act as a creative partner for children, helping them invent stories, design characters, or compose music. Used wisely, it can inspire children to think beyond traditional boundaries.

However, AI works best when it supplements imagination rather than replacing it. Parents and teachers should encourage children to see AI as a co-creator, not the creator.

What role does AI play in children’s safety and well-being?

AI can filter harmful content, monitor for cyberbullying, and even support mental health through chatbots or well-being apps. It can also help detect health issues earlier.

At the same time, safety cannot be left to AI alone. Parents and educators must supervise, set boundaries, and understand how these tools handle children’s data.

Does AI increase inequality among children?

Yes, if not managed carefully, AI can widen the gap between children with access to technology and those without. Wealthier families and schools may gain the most from personalized AI, while others fall further behind.

Global initiatives by UNICEF and UNESCO highlight this divide, stressing the need for infrastructure, affordability, and fair design so every child has a chance to benefit.

Who should be responsible for children’s use of AI?

Responsibility lies with parents, educators, companies, and governments. Parents guide AI use at home, teachers help children navigate it in classrooms, companies must design safe and fair tools, and governments provide rules and protections.

When all groups work together, AI can become a safe and enriching part of childhood. When they fail, children carry the risks.

Can AI replace parents or teachers in raising or educating children?

No, AI cannot replace parents or teachers — it can only support them. AI can deliver information, but it cannot nurture empathy, model values, or provide human connection.

Children need adults to help them question, interpret, and balance AI’s influence. Education and parenting are human-centered roles where technology must remain a tool, not a substitute.

What does “Alice in AI Land” mean for children?

It’s a metaphor for children stepping into a strange new world of AI. The White Rabbit represents algorithms leading deeper into technology, the Queen of Hearts represents unchecked power, and the Cheshire Cat represents personalization, which can seem helpful yet also manipulate moods and narrow horizons.

By using this metaphor, the project helps parents, teachers, and policymakers think critically about how to guide children safely through AI’s wonder and risks.