AI and Childhood Identity — Growing Up in a Digital Mirror

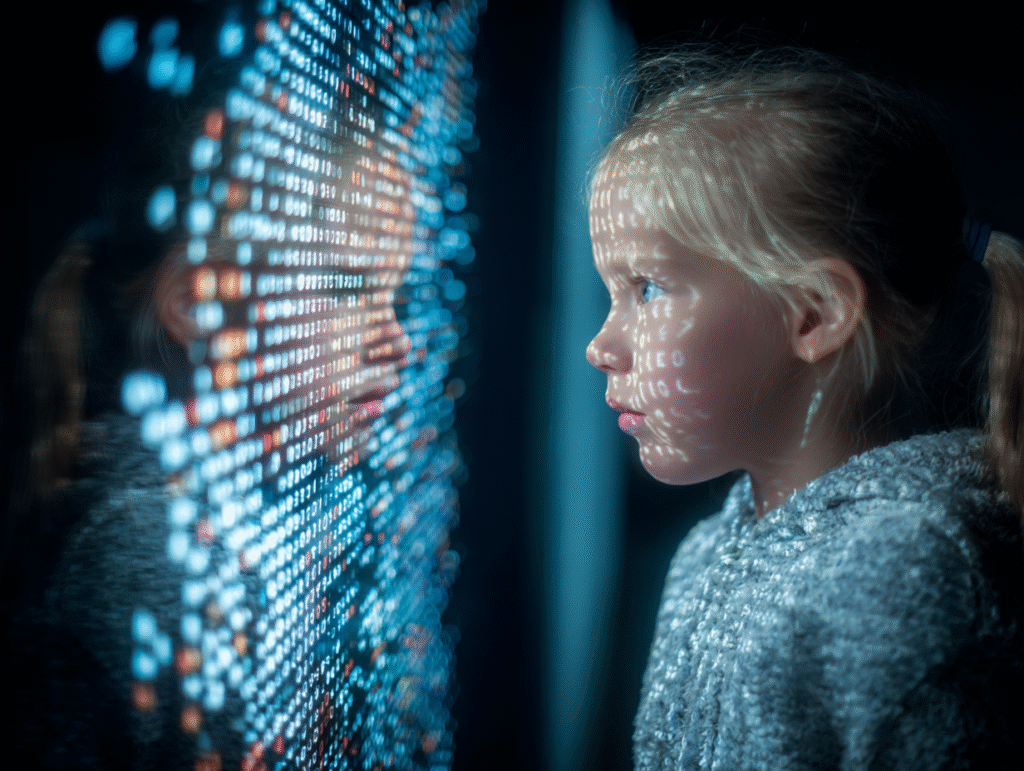

Every generation of children learns to see itself through reflections.

A parent’s gaze, a friend’s laughter, a teacher’s praise — each one acts as a mirror, shaping the story a child tells about who they are.

But today, another mirror stands beside them: invisible, ever-present, and infinitely attentive. It watches, listens, predicts. It remembers every question, every click, every pause before a choice.

That mirror is artificial intelligence.

For the first time in history, children are growing up in an environment where the reflection talks back.

They ask Alexa a question and receive an instant, confident answer.

They watch one video, and the next is chosen for them before they even realize what they want.

They feed small fragments of themselves into a system that responds as if it knows them — not as they are, but as their data suggests they might be.

This is what makes AI more than a tool; it’s a psychological mirror, one that quietly helps to form self-perception.

Unlike traditional mirrors, it does not simply reflect the surface — it interprets and reacts.

It amplifies what it notices, and hides what it doesn’t.

It says: You liked this once — so you must like it again.

You asked this question — so you must be curious about this topic.

Through these constant, subtle loops of recognition and repetition, a child begins to see themselves through the eyes of the algorithm.

UNICEF researchers have already warned that such systems can “shape children’s beliefs, behaviors, and even sense of identity.”

This shaping is not overt manipulation — it’s suggestion disguised as reflection.

And yet, for a developing mind still learning to separate “me” from “the world,” the distinction matters deeply.

What happens when a child’s mirror is optimized not for truth or empathy, but for engagement?

When self-knowledge becomes mediated by predictive models and recommendation feeds?

In the age of AI, childhood is not merely watched — it is interpreted.

The mirror is no longer still; it moves, it speaks, it persuades.

Like Alice peering through the looking glass, today’s children enter a realm that both reflects and distorts them — a realm where curiosity can open doors, but also corridors of infinite reflection.

Our task, then, is not to panic, but to understand.

To ask not only how children use AI, but how AI uses them — how it molds identity, confidence, and imagination.

Because in the end, a mirror that talks back also teaches.

And what it teaches will depend on what we, as adults, allow it to say.

The Algorithmic Mirror

Every interaction a child has online leaves a trace: a tiny data point in a vast ocean of prediction.

Each video watched, song skipped, question asked, and pause before a click becomes a piece of a digital self-portrait.

In turn, the algorithm responds: it observes, categorizes, and begins to speak back through its recommendations.

A conversation begins — quiet, invisible, and endlessly adaptive.

This is the algorithmic mirror, the first mirror in history that learns.

For a child, this can feel like magic.

“YouTube knows what I like.”

“Spotify always plays my favorite songs.”

Behind this wonder, though, is a system that’s constantly training on the child’s attention — shaping what they see next, and eventually, how they see themselves.

In developmental psychology, identity grows through exploration and feedback: the child acts, the world responds, and meaning is formed.

But when the world is an algorithm, the feedback becomes a loop, not a dialogue.

The process is simple but powerful:

Behavior → Data → Algorithmic Response → Reinforced Behavior.

Click on one video about space, and the feed fills with galaxies.

Watch two videos on beauty, and soon every scroll offers tutorials on perfection.

Play a single horror clip, and fear becomes entertainment.

Within hours, a child’s world narrows, not by choice but by optimization.
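The loop Behavior → Data → Algorithmic Response → Reinforced Behavior can be made concrete with a toy simulation in Python. This is a sketch under invented assumptions, not a description of how YouTube or TikTok actually rank videos. The topics, the 50% click probability, and the softmax weighting controlled by a hypothetical `beta` parameter are all illustrative. Every topic is equally likely to be clicked, so any narrowing comes from the loop itself rather than from the child’s preferences.

```python
import math
import random
from collections import Counter

# Hypothetical topics and click probabilities, invented for illustration only.
# Every topic is equally clickable, so the child has no built-in preference.
TOPICS = ["space", "crafts", "music", "animals", "horror"]
CLICK_PROB = {topic: 0.5 for topic in TOPICS}


def recommend(clicks: dict[str, int], beta: float, rng: random.Random) -> str:
    """Algorithmic response: favor topics with more past clicks (softmax weights).

    beta = 0 ignores history entirely; larger beta exploits past clicks harder.
    """
    top = max(clicks.values())
    # Subtract the max before exponentiating so large counts cannot overflow.
    weights = [math.exp(beta * (clicks[t] - top)) for t in TOPICS]
    return rng.choices(TOPICS, weights=weights)[0]


def simulate_feed(steps: int, beta: float = 1.0, seed: int = 0) -> list[str]:
    """Run the toy loop and return the sequence of recommended topics."""
    rng = random.Random(seed)
    clicks = {t: 0 for t in TOPICS}  # data: the child's click history
    shown: list[str] = []
    for _ in range(steps):
        topic = recommend(clicks, beta, rng)  # response
        shown.append(topic)
        if rng.random() < CLICK_PROB[topic]:  # behavior: click or skip
            clicks[topic] += 1  # reinforcement: the click shapes the next pick
    return shown


if __name__ == "__main__":
    for beta in (0.0, 1.0):
        feed = simulate_feed(steps=2000, beta=beta)
        print(f"beta={beta}, last 100 videos:", Counter(feed[-100:]).most_common())
```

With `beta=0.0` the feed ignores past clicks and stays spread across all five topics. With `beta=1.0`, one topic typically takes over almost the entire feed early in the run. Which topic wins depends only on early chance clicks.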

A Mozilla study on TikTok found that within forty minutes of use, the algorithm had “locked in” on a single theme for each user. Eighty percent of subsequent videos reinforced that theme.

The result is a subtle, digital determinism: you are what you engage with.

This might seem harmless when it’s cartoons or crafts, but identity doesn’t just live in what children see — it lives in what they don’t.

When algorithms decide what “fits,” they also decide what doesn’t.

A child who shows interest in one topic is rarely nudged toward its opposite; curiosity becomes a closed loop.

Over time, this can erode what psychologists call exploratory identity formation — the trial-and-error process that lets young people experiment with values, tastes, and perspectives before committing to a stable sense of self.

Instead of exploration, they experience repetition.

Instead of discovery, reinforcement.

The mirror rewards sameness.

Even educational AI systems can reflect this pattern.

Adaptive learning platforms label students as “advanced,” “average,” or “struggling” — and then feed them content accordingly.

These classifications, though useful for efficiency, risk turning into self-fulfilling prophecies.

A student told by an algorithm that they’re “below average” may start to act like it; feedback from a machine can shape self-concept much as feedback from a teacher does.

What was meant as personalization can become a subtle form of identity assignment.

For younger children, the algorithmic mirror appears even earlier.

Children talk to voice assistants, anthropomorphizing them as companions who answer, joke, and obey.

But these interactions teach their own lessons about language and power: that questions have instant answers, that politeness is optional, that persistence gets results.

Developmental researchers note that some children even begin to imitate the assistant’s tone — the clipped commands, the neutral delivery, the absence of emotional nuance.

It’s a new form of social learning: learning from a machine that cannot feel.

Over time, the mirror’s voice becomes familiar — comforting, even.

But it is still a reflection built for engagement, not growth.

The child’s “likes” become their label; their digital patterns become their personality.

And as this algorithmic self strengthens, the organic self — fluid, contradictory, alive — risks being edited out.

To grow up inside such a mirror is to be constantly seen, yet only partially known.

The question for this generation is not whether AI understands them — it’s whether they will still understand themselves.

The Myth of Perfection

Every mirror flatters a little — but the digital one lies beautifully.

It smooths the skin, widens the eyes, softens the light.

It whispers that imperfection can be corrected, that reality can be optimized.

And for millions of children and teenagers, this has quietly become the default way to see themselves.

The algorithmic mirror doesn’t just show what is — it shows what performs.

When AI systems amplify certain faces, bodies, and lifestyles because they generate more engagement, they turn aesthetics into metrics.

Beauty becomes measurable, and comparison becomes constant.

Children learn early that visibility equals value: the prettier the post, the higher the reward.

It’s a subtle transaction — dopamine for conformity.

By adolescence, this transaction becomes an identity economy.

Teens edit, filter, and curate themselves for an audience of invisible algorithms.

Each image they upload is a negotiation between how they feel and how they want to be perceived.

The result is a digitally sculpted self — one that exists somewhere between aspiration and deception.

The danger isn’t just narcissism; it’s alienation.

A generation begins to recognize itself not by the mirror in the bathroom, but by the mirror on the screen.

Meta’s own internal research, leaked in 2021, found that about one in three teenage girls who already felt bad about their bodies said Instagram made them feel worse.

That number doesn’t describe vanity — it describes an epidemic of self-comparison.

When every scroll reveals filtered perfection, the brain does what it has always done: it compares and corrects.

Psychologically, this triggers what researchers call upward social comparison: measuring oneself against people who seem better off. Online, the comparison target is often a filtered ideal that no one can actually attain.

The result is a chronic gap between self-perception and perceived perfection — an emotional loop of “I could be better, if only…”

What makes this loop uniquely powerful is that it’s not static.

Unlike a magazine, the algorithm learns which ideal captivates each viewer.

If a twelve-year-old lingers a little longer on one kind of face, body, or outfit, the feed soon fills with more of it — training both the algorithm and the child’s perception of normality.

Over time, the mirror adjusts itself to feed our weaknesses.

The machine doesn’t judge — but it rewards the things that make us insecure.

This pressure to perfect begins younger each year.

Many tweens now use beautifying filters before they have learned to see their unfiltered faces as normal.

Some refuse to share an image unless it’s been run through an AI smoother.

And in this quiet ritual of self-correction, they internalize a dangerous lesson: my real self is not ready for the world.

Developmental psychology tells us that during middle childhood, the self-image becomes increasingly tied to social approval.

Children begin to evaluate themselves through comparison with others — grades, looks, abilities.

When that process happens inside an algorithmic environment, social comparison becomes infinite and inescapable.

A single photo doesn’t just compete with friends from school — it competes with global perfection.

Yet the tragedy of this digital perfection isn’t only the insecurity it creates.

It’s the loss of authenticity it enforces.

If every post must be beautiful, then every emotion must be edited.

The messy, awkward parts of growth — the failures, the uncertainty, the silliness — disappear from public expression.

Children begin to curate not just their photos, but their personalities.

The online self becomes a brand, while the private self quietly dissolves.

This is not simply a matter of self-esteem; it’s the architecture of identity itself being rewritten.

A generation raised under algorithmic admiration is learning that to be loved is to be optimized.

And the more they perfect the image, the less they trust the reflection.

Authenticity in the Age of Algorithms

There was a time when children learned authenticity by testing it — saying something risky, seeing how others responded, and gradually discovering who they really were.

But today, the mirror listens first. It calculates, predicts, and answers before they even finish expressing themselves.

The child learns quickly: some behaviors are rewarded, others disappear into silence.

And so begins a quiet apprenticeship — not in self-expression, but in self-optimization.

Every post, every story, every caption becomes a kind of audition.

Children start performing for an audience that isn’t human — an algorithm that quantifies emotion and converts it into reach.

The “like” is no longer just social approval; it is algorithmic currency, shaping visibility itself.

Teens intuitively feel this, even without understanding the code.

They learn what kind of joke travels farther, what pose performs better, what emotion looks authentic enough to attract attention without looking vulnerable.

Each interaction becomes a micro-calibration: a lesson in what the machine wants.

Psychologists have long described adolescence as the stage of “identity versus role confusion.”

Traditionally, this confusion is resolved through experimentation — trying on personas until one fits.

But the algorithmic environment doesn’t encourage experimentation; it encourages consistency.

Once it detects a pattern — the “funny one,” the “aesthetic one,” the “quiet one” — it reinforces it relentlessly.

And the child, eager for visibility, complies.

Authenticity gives way to predictability, because predictability performs better.

A 2024 Science Advances study found that adolescents are about 44% more responsive to “likes” than adults, and experience a sharper drop in mood when engagement is lower than expected.

This suggests that a teenager’s brain, still wiring its sense of self, is especially sensitive to digital approval and rejection.

Each like, share, or silence becomes feedback that defines what parts of them deserve to exist in public.

In time, they internalize a dangerous rule: Be who the algorithm prefers.

This behavioral conditioning works like emotional gravity.

If the internet smiles when they act loud, they grow louder.

If it ignores their quiet moments, they stop sharing them.

Soon, children are no longer exploring identity — they’re managing a brand.

And yet, this brand is brittle, because it exists only as long as the mirror shines back approval.

Albert Bandura’s social learning theory holds that we imitate behaviors we see rewarded in others.

In the digital ecosystem, the models are influencers — avatars of confidence, beauty, or rebellion, curated for engagement.

Children don’t merely watch them; they study them like scripts.

“How should I talk?”

“What should I care about?”

“Who should I become to be seen?”

And because the algorithm amplifies what works best, it trains entire generations in the aesthetics of performance.

There’s a quiet tragedy here: authenticity becomes something strategic.

Teens talk about being “relatable” online, but relatability itself has become a genre — a performance of imperfection rehearsed until it feels safe.

Even vulnerability is algorithmically stylized: share a personal story, but make it digestible; show sadness, but keep it photogenic.

They’ve learned to express only what the machine won’t punish.

This conditioning runs deeper than attention spans — it reaches identity itself.

When a child measures self-worth through reaction metrics, they begin to outsource their emotional validation to the system.

The algorithm becomes the new superego: invisible, omnipresent, quietly judgmental.

It rewards the version of the self that pleases the world, and ignores the one that’s inconvenient.

After years of such invisible shaping, many teens no longer ask “Who am I?” but rather “What version of me works best?”

To live authentically now requires rebellion — a refusal to perform for the mirror.

But rebellion is risky, because invisibility is the punishment.

The silence that follows an unfiltered post can feel like social death.

And yet, that silence is precisely where selfhood begins — the space untouched by metrics, where a young person can exist without performance.

Helping children reclaim that silence, that right to unmeasured existence, may be one of the deepest psychological challenges of our age.

Because until they learn to be unseen without feeling erased, they will never truly know who they are when no one — not even the algorithm — is watching.

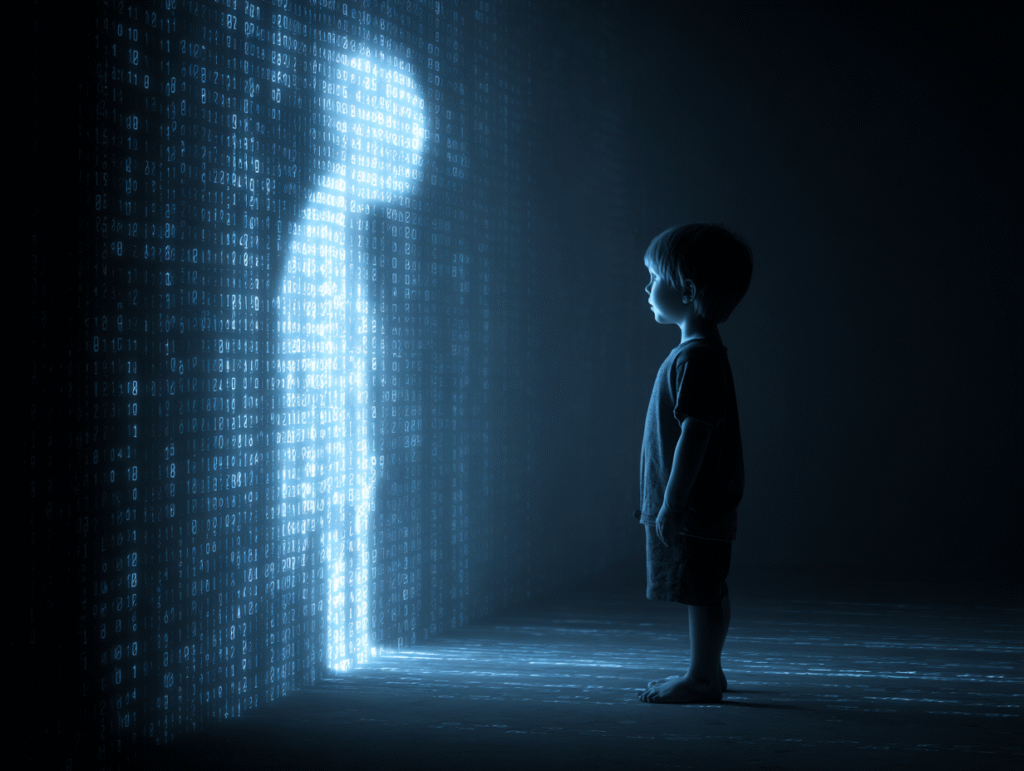

Digital Doppelgängers — Avatars, AI Twins, and Fragmented Selves

When children used to imagine another self, they did it in stories — a secret alter ego, a dream version, a hero in disguise.

Now, they can build that other self.

They can design it, render it, and send it into the digital world to live on their behalf.

In games, social apps, and virtual spaces, they create avatars that talk, walk, and act in their place.

For the first time in history, the mirror has a body.

To a young mind, this is exhilarating.

A child can be taller, braver, more colorful — or even an entirely different being.

In Minecraft, they might construct worlds that reflect inner emotions; in Roblox or VRChat, they can switch between identities at will.

It’s play — but play has always been a rehearsal for identity.

In the digital age, however, the stage is endless, and the masks never have to come off.

Some research suggests that adolescents are more likely than adults to identify emotionally with their avatars.

Almost half of Gen Z gamers report that their in-game self feels more authentic than their real one.

For some, this is liberation: a safe space to express what reality suppresses.

A shy child can speak confidently through a digital persona; a marginalized teen can explore gender, style, or self-expression that might otherwise invite ridicule.

For them, the avatar is not deception — it is permission.

It allows the self to breathe.

Yet every freedom carries its shadow.

An avatar can also become a mask that hardens — a version of the self that gains more validation, attention, and emotional power than the body behind it.

If the digital self feels “better,” the physical self begins to feel insufficient.

The child may start to chase the version that performs more smoothly, talks more wittily, or looks more perfect — even though that version is only an echo.

Psychologist Erik Erikson described adolescence as a time of “identity versus role confusion.”

It is the stage when one experiments with roles to discover which are authentic.

But in an AI-mediated world, those roles can multiply infinitely — and never conclude.

When a teenager can create endless selves with a few prompts or filters, the process of integration — choosing who to be — becomes harder.

Identity is no longer a story; it’s a menu.

And when everything is possible, commitment feels impossible.

This fragmentation deepens as AI begins to clone the self directly.

With a handful of photos, a short voice clip, or a few writing samples, AI tools can now imitate a child’s face, voice, or writing style.

What once belonged uniquely to them — tone, cadence, gesture — can be reproduced endlessly.

The result is the rise of digital twins: synthetic echoes that look and sound human, yet lack the soul behind the data.

A teenager can already hear an AI-generated version of their voice reading text aloud, or watch a deepfake of themselves dancing, speaking, or existing in a space they never entered.

The mirror no longer just reflects; it fabricates.

For some, these digital doubles might seem like harmless novelty, but for a developing identity, they can cause ontological confusion.

If an AI can imitate me, then what am I?

If my digital twin performs better, receives more praise, or gains more visibility, which version is truly “me”?

The child begins to live between realities — the organic and the algorithmic — constantly cross-referencing how they appear versus how they perform.

More concerning still is the emotional bond that can form between children and AI-generated companions.

Chatbots, virtual pets, and AI friends learn a child’s language patterns and adapt accordingly.

They never argue, never shame, never leave.

This can feel like a haven, especially for lonely or anxious youth.

But emotional comfort without friction teaches dependency, not maturity.

Developmental psychologists warn that strong attachments to AI “friends” may interfere with empathy development, because empathy grows through navigating the imperfection of real human relationships.

When a companion always agrees, the self becomes untested.

The digital doppelgänger, then, is both muse and mirror.

It can reveal hidden parts of a child’s psyche — or trap them inside their projection.

It can empower, or alienate.

And it challenges one of the oldest psychological truths: that identity is built through the tension between self and other.

But what happens when the “other” is just a reflection of the self, programmed to please?

Perhaps the most haunting image of our time is not the child gazing into the mirror, but the mirror gazing back — alive, responsive, endlessly imitative.

The question is no longer “Who am I?”

It is “How many of me exist — and which one is real?”

Nurturing Real Identity in the AI Age

If the digital mirror can shape children’s sense of self, then the task of our time is not to shatter it — but to teach children how to look into it wisely.

We cannot (and should not) remove technology from their lives.

What we can do is help them grow the inner clarity to recognize when the reflection is warped.

That begins with literacy — not just in code, but in consciousness.

A. Teaching AI Literacy and Psychological Awareness

Children already learn to read words and numbers; now, they must also learn to read algorithms.

AI literacy means understanding that what they see online is not random — it is curated by invisible systems optimized for engagement, not truth.

When a child realizes that “the feed” is not a mirror of the world but a reflection of their past clicks, curiosity becomes self-awareness.

They can start to ask critical questions:

Why am I seeing this?

What is this app trying to make me feel or do?

These simple reflections transform passive consumption into conscious interaction.

But literacy cannot end with the machine.

Children also need psychological literacy — the ability to recognize how their emotions respond to digital stimuli.

How their mood shifts after scrolling.

How likes trigger validation, and silence triggers doubt.

When they understand that these reactions are natural but trainable, they begin to reclaim authorship over their emotional world.

In essence, we must teach children not only how AI learns about them, but how they learn about themselves through AI.

B. The Role of Parents and Educators

Guidance begins in conversation, not control.

Parents and teachers should not be digital police; they should be interpreters — helping children decode what they experience online.

When a child feels inadequate after seeing idealized content, it’s not the time to scold or ban; it’s a chance to ask, “Why do you think this image made you feel that way?”

Such dialogues build what psychologists call meta-awareness — the ability to observe one’s own mind in motion.

It is this awareness that allows a young person to separate who I am from what I see.

Adults can also practice co-viewing — entering children’s digital spaces together, exploring apps and games side by side.

When a parent sits beside a child watching YouTube and says, “Let’s see what the algorithm recommends next — what do you think it learned from you?” they transform a passive medium into a learning moment.

This shared curiosity turns surveillance into mentorship.

In schools, AI literacy programs should be as fundamental as language or math.

Children can analyze their own digital environments — how search results change with phrasing, how recommendation systems reinforce bias, how AI chatbots can be both tools and tricksters.

When a student can look at an algorithm and say, “This isn’t magic; it’s a mirror,” they become less susceptible to manipulation.

C. Building Emotional Anchors Beyond the Screen

Even the most enlightened digital awareness needs grounding in the physical world.

Offline experiences are what give identity texture and resilience — touch, conversation, struggle, nature, boredom, creativity.

These are the moments the algorithm cannot replicate.

Families can establish “digital sabbaths” — evenings or days where screens are set aside in favor of tactile, shared activities.

Art, sports, cooking, music — any space where self-expression is embodied rather than broadcast.

Children who build confidence through real accomplishments (drawing, climbing, playing an instrument) learn that worth doesn’t come from visibility.

The goal isn’t to reject technology, but to balance it with embodied reality.

As one psychologist put it, “Kids need at least one offline dopamine source each day” — something that brings joy without metrics.

The more such moments they accumulate, the less the algorithm becomes their primary mirror.

D. Modeling and Environment

Children learn less from what adults say and more from what they watch us do.

If a parent is always checking notifications, a child learns that attention is something to be outsourced.

If an educator treats technology as both wonder and responsibility, students mirror that balance.

Adults, too, must demonstrate digital composure — the ability to pause before reacting, to use technology deliberately rather than reflexively.

In doing so, they teach by embodiment what authenticity looks like in a connected world.

E. Cultural and Policy Shifts

Finally, nurturing authentic identity requires a collective effort.

Platforms that profit from engagement must begin designing for wellbeing, not addiction.

UNICEF and the APA have called for child-centric AI design — transparent algorithms, age-appropriate data policies, and interfaces that foster exploration rather than fixation.

Developers can build systems that diversify, not narrow, what children see: algorithms that occasionally show something different, something outside the feedback loop.
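One well-known way to build in that “something different” is exploration, borrowed from multi-armed bandit methods. The Python sketch below uses a variant of epsilon-greedy selection. The function name, the topic scores, and the choice to explore only among non-favorite topics are assumptions for illustration. It does not describe any real platform’s system.

```python
import random
from collections import Counter


def pick_with_exploration(scores: dict[str, float], epsilon: float,
                          rng: random.Random) -> str:
    """Mostly serve the favorite topic, but occasionally show something else.

    A variant of epsilon-greedy exploration (hypothetical helper): with
    probability `epsilon`, the pick comes uniformly from the topics outside
    the current favorite; otherwise the highest-scoring topic is served.
    """
    favorite = max(scores, key=scores.get)
    others = [t for t in scores if t != favorite]
    if others and rng.random() < epsilon:
        return rng.choice(others)  # step outside the feedback loop
    return favorite  # exploit: keep serving the learned preference


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical engagement scores for a child who mostly watched space videos.
    scores = {"space": 40.0, "crafts": 3.0, "music": 2.0, "history": 1.0}
    for eps in (0.0, 0.2):
        feed = Counter(pick_with_exploration(scores, eps, rng) for _ in range(1000))
        print(f"epsilon={eps}: {dict(feed)}")
```

With `epsilon=0.0` the feed shows only the favorite. With `epsilon=0.2`, roughly one recommendation in five comes from outside it. The trade-off is explicit: a higher epsilon widens what the child sees at some cost to short-term engagement.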

In a sense, AI itself can be programmed to become a better mirror.

Raising children in the age of intelligent mirrors is no longer just about teaching them what’s right and wrong.

It’s about helping them become aware observers of their own reflection.

The goal isn’t to escape the algorithm, but to grow minds that can walk beside it — curious, critical, and whole.

Because a child who understands how they are being shaped can begin, for the first time, to shape themselves.

The Mirror That Talks Back

Every generation faces a new mirror.

For centuries, it was the eyes of parents, the stories of culture, the quiet reflections of solitude.

Ours, however, glows in the dark — a living mirror, fluent in our patterns, infinitely patient, always listening.

Artificial intelligence has become the first mirror in history that does not simply reflect, but responds.

For a child, that response feels like magic.

Ask it a question, and it answers.

Feed it an image, and it paints one back.

It can comfort, entertain, even praise.

But what it shows — and what it withholds — is guided by invisible mathematics that knows how to hold attention better than any human ever could.

And so, between play and programming, between laughter and metrics, identity begins to take shape.

Yet identity is not data.

It is contradiction — the ability to hold many selves, to be curious about what doesn’t fit the model.

It grows from uncertainty, struggle, and reflection, not from prediction.

When children grow up surrounded by intelligent mirrors, the danger is not that they will be replaced by machines, but that they will start thinking like them — seeking constant validation, fearing errors, avoiding complexity.

That is why the greatest skill we can teach this generation is not coding — it is consciousness.

The ability to see the mirror as a mirror, not as the truth.

To understand that what the algorithm reflects is a fraction of reality, not the whole.

That the light in the screen is not the same as the light in their eyes.

The mirrors of this age will not go away.

They will grow smarter, more persuasive, more beautiful.

But so can we.

We can raise children who walk through the digital looking glass and return wiser — children who use technology as a companion in learning, not a compass for identity.

We can design systems that expand curiosity rather than compress it, that teach empathy rather than mimic it.

In the end, AI will always reflect what we feed it.

If we feed it insecurity, it will magnify longing.

If we feed it awareness, it will mirror back wisdom.

The choice, as always, belongs to us — the designers, the parents, the educators, the storytellers.

And if we do this right — if we teach our children to see clearly, to think freely, to feel deeply — then when the mirror talks back, they will know how to listen without losing themselves.

They will smile at their reflection and say,

“I know who I am — even here.”

Frequently Asked Questions

How does AI influence a child’s sense of identity?

AI shapes identity by reflecting a child’s behavior back to them through personalized algorithms — what they watch, like, or search becomes the mirror that defines what they see next.

Over time, these algorithmic feedback loops reinforce certain preferences and traits, gradually influencing how children perceive themselves and what they believe they like, value, or should become.

What is the “algorithmic mirror” and why does it matter?

The “algorithmic mirror” describes how AI systems (like recommendation feeds or learning platforms) reflect and amplify a user’s data-driven self.

For children, this mirror can narrow exploration — showing only familiar content — and shape emotional development by rewarding specific behaviors with digital approval.

Understanding it helps parents and educators teach children that the mirror is not neutral; it’s a system optimized for engagement, not self-understanding.

How do social media filters and AI-driven aesthetics affect self-esteem in kids and teens?

AI-enhanced filters and beauty algorithms promote unrealistic ideals of perfection.

Children quickly learn that posts or images that fit these ideals perform better — gaining more likes and validation.

This creates pressure to edit or “optimize” their appearance, leading to comparison, anxiety, and in some cases, body-image distortion.

Authenticity gets replaced by performance, and self-worth becomes tied to digital feedback rather than self-acceptance.

Why are avatars and digital identities important to discuss in child development?

Avatars allow children to explore identity through play — a natural and valuable developmental process.

But in digital environments, that exploration can become endless and ungrounded.

A child may grow more attached to a digital persona that feels “better” or more accepted than their real self.

Helping them integrate these experiences — seeing avatars as creative extensions, not replacements — is essential for emotional balance and authenticity.

Can AI-generated “friends” or chatbots harm a child’s social development?

AI companions can offer comfort and curiosity, especially for lonely or anxious children, but they also risk emotional dependency.

Because many of these systems are built to agree rather than challenge, they can weaken empathy and frustration tolerance, skills that develop mainly through real human interaction.

AI can simulate listening, but it cannot truly understand; guiding children to differentiate emotional simulation from real connection is key.

How can parents help children stay authentic in an AI-driven world?

Authenticity grows from awareness and experience.

Parents can nurture it by:

Talking openly about how algorithms work (“Why do you think this was recommended to you?”).

Encouraging offline experiences — art, play, nature — that build confidence without metrics.

Practicing digital balance as a family (shared screen-free time).

Modeling mindful technology use — showing that attention is a choice, not a reflex.

The goal isn’t restriction but reflection: helping children see technology as a tool, not a mirror of their worth.

What role should schools play in protecting children’s digital identity?

Schools can cultivate AI literacy — teaching students to understand how digital systems influence thought and behavior.

Lessons can include analyzing how search results change, identifying bias in AI recommendations, or exploring emotional responses to technology.

By integrating psychology and ethics into digital education, schools can raise a generation that recognizes when they are being shaped — and chooses how to shape back.

What does “nurturing real identity” mean in the age of AI?

It means teaching children to hold a strong sense of self that isn’t dependent on algorithms or approval.

A “real” identity is flexible yet grounded — capable of existing both online and offline with coherence.

It involves curiosity, emotional literacy, self-reflection, and resilience.

Children who understand that their worth exists beyond the feed are less likely to lose themselves inside it.

Is AI inherently harmful to children’s development?

Not inherently — AI is a mirror, not a monster.

Its effects depend on how it is designed, used, and interpreted.

When AI tools are transparent, diverse, and educational, they can foster creativity and learning.

When they’re optimized solely for engagement, they can narrow identity and amplify insecurity.

The goal is not to reject AI, but to humanize it — designing systems that serve growth rather than exploitation.

How can society ensure AI supports rather than distorts childhood identity?

It starts with collective responsibility.

Tech companies must adopt child-centric design principles, ensuring algorithms expose children to diverse content and avoid exploitative feedback systems.

Educators must integrate digital ethics into curricula.

Parents must model reflective use.

And policymakers must enforce transparency in AI that interacts with children.

Together, these shifts can turn AI from a manipulative mirror into an educational one — a reflection that helps the next generation see clearly.